If you block out the noise coming out of Washington D.C., as most investors do, the fourth and final full week of September 2019 saw a lot of good news for the U.S. economy. Even the ever uber-pessimistic Tyler Durden grudgingly acknowledged as much before setting the stage for future doom:

The last few weeks have seen a dramatic surge in US macro data surprises (to the upside), dramatically diverging from the rest of the world (and almost single-handedly improving the global data)...

ZeroHedge chalks much of that effect up to the U.S. government's "Use It Or Lose It" end-of-fiscal-year spending, which they argue is having a short term stimulative effect on the U.S. economy. Which will soon come to an end (this is ZeroHedge, after all)!

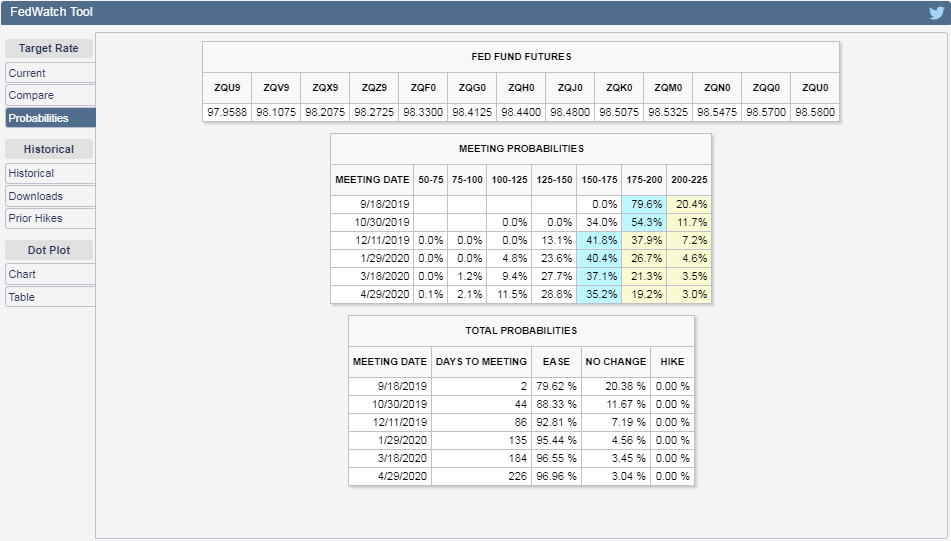

But the Tylers are good observers of the market and we can confirm the cumulative effect of the upside economic data surprises with the CME Group's FedWatch Tool, which not only no longer indicates any rate cuts after 2019, but also indicates the falling probability of a rate cut in the fourth quarter of 2019 (2019-Q4):

That's good news, but in the chaotic math of how stock prices work, that means investors are shifting their forward-looking attention inward from the distant future horizon of 2020-Q1 toward 2019-Q4, which means that stock prices would fall given the expectations associated with that quarter.

That may be the start of the sixth Lévy flight event of 2019, although at this point, we may see investors opt to split their focus between 2019-Q4 and 2020-Q1, where we might not see another full quantum-like shift from the trajectory for 2020-Q1 to the alternate trajectory for 2019-Q4 like we did earlier this quarter.

If we do, it will be associated with a much larger drop in stock prices than occurred in early August 2019, because the expectations for the change in the year-over-year rate of growth of future dividends for the S&P 500 have changed, with a larger gap opening up between the alternate trajectories associated with investors focusing on either 2019-Q4 or 2020-Q1.

If the flow of upside surprises slows and negative surprises start to take hold, however, we could see stock prices rise with the increased probability of a rate cut in 2020-Q1. Or the picture for the economy may continue to improve, which would, seemingly paradoxically, send stock prices lower as the potential for one last rate cut before the end of 2019 becomes a point of focus.

Or investors could have reason to shift their attention to another point of time altogether, with the trajectory of the S&P 500 shifting accordingly. No matter what, the current market environment is ripe for stock prices to experience a lot of potential volatility. If you know which way it's going to go, more power to you!

Speaking of the current market environment, here's a sampling of market-moving news headlines from the fourth week of September 2019:

- Monday, 23 September 2019

- Oil rises about 1% on concerns about return of Saudi output

- Chinese farm official says 'good outcome' from trade talks

- China buys about 10 cargoes of U.S. soybeans after trade talks

- Chinese U.S. homebuying to hit eight-year low, says leading property site

- Fed's Bullard: U.S.-China relations probably had to come to a head

- Bigger trouble developing in the Eurozone:

- Euro zone economic rebound not in sight: ECB's Draghi

- German private sector shrinks in September for first time in over six years: PMI

- Bigger stimulus developing in China:

- The Fed's $400 Billion Plan to Bailout the Repo Market

- NY Fed's Williams says NY Fed actions had desired effect of reducing market strains

- Fed's Bullard says prefers standing repo facility to ensure smooth markets in the future

- Wall Street ends flat as mixed economic data signals caution

- Tuesday, 24 September 2019

- Wednesday, 25 September 2019

- Oil falls about 1% on surprise U.S. crude build, Saudi crude output

- India's stuttering economy hits global oil demand

- Lower mortgage rates stimulate lethargic U.S. housing market

- Fed minions pat selves on back:

- Evans says two rate cuts have Fed 'well-positioned' at this point

- Evans: Larger balance sheet may avoid future repo market problems

- Trump impeachment inquiry not a problem for the Fed: Bullard

- Wall Street bounces back as investors shrug off impeachment risk

- Thursday, 26 September 2019

- Oil steady as Middle East conflict fears support, Trump probe weighs

- China's top diplomat says Beijing willing to buy more U.S. products

- Fed minions issue statements on life, the universe, everything:

- Fed's Kashkari: U.S. economy needs lower interest rates

- Fed's Clarida says inflation expectations in line with mandate

- Dallas Fed's Kaplan: U.S. needs more, not less, immigration for economic growth

- Dallas Fed's Kaplan says global economy 'fragile' but U.S. will 'skate through'

- U.S. Fed should target repo rate to reduce market volatility: ex-Fed officials

- Wall St dips as whistleblower report adds to investor caution

- Friday, 27 September 2019

Barry Ritholtz outlines no fewer than eight positives and negatives each that he found in the past week's economics and market-related news.

Scandal erupted on the Australian edition of The Bachelor when the Bachelor, 32-year old astrophysicist Matt Agnew, asked 28-year old chemical engineer Chelsie McLeod to solve math problems in order to find the combination numbers of a safe on their third and final date before the show's conclusion.

We're not making this up. Here's a video excerpt from the show:

The task greatly upset the show's fan base. Here's the story from the Daily Mail:

Since day one, Bachelor Matt Agnew has embraced the fact Chelsie McLeod shares his same passion for science.

But fans were left confused during the pair's final date on Wednesday's semi-final, as the astrophysicist, 32, tasked the chemical engineer, 28, with math equations.

'This would literally be my worst nightmare!' one fan Tweeted, after Matt described the maths problem as a 'fun activity' for Chelsie to complete.

'I cannot believe you're making me do this right now!' Chelsie said to Matt, which viewers appeared to agree with.

Matt handed Chelsie a pen and paper to solve a math problem to open a safe, which housed a present for the star.

At least it ended well. Chelsie correctly solved the math problems, determined the combination to the safe, and revealed the prize:

After cracking equations, Chelsie typed in the code which opened to a box with a necklace engraved with the chemical formula for oxytocin.

The formula was a reference to their first meeting on the red carpet where she gave him a temporary tattooed of the same chemical.

'So you got to put some oxytocin on my chest, to my heart, and this way, the necklace, it'll be close, some oxytocin near your heart as well.'

It's probably no surprise that the two ended up together in the aftermath of the show's suitably romantic final rose ceremony.

But more than just a triumph of math and love, the episode demonstrates assortative mating in action, where couples come together because of their shared potential in addition to their shared attraction. More often than not, that shared potential also reflects their shared earning potential.

But since most couples don't have the accelerated contrivances of a televised dating show to utilize during their courtships, how does that tend to work in the real world?

Alparslan Tuncay described how couples made up of individuals with similar earnings potential often come together in a paper Marginal Revolution's Tyler Cowen described as "the best results on assortative mating and inequality I have seen". Here's the abstract:

This paper studies the evolution of assortative mating in the permanent wage (the individual-specific component of wage) in the U.S., its role in the increase in family wage inequality, and the factors behind this evolution. I first document a substantial trend in assortative mating, as measured by the permanent wage correlation of couples, from 0.3 for families formed in the late 1960s to 0.52 for families formed in the late 1980s. I show that this trend accounts for more than one-third of the increase in family wage inequality across these cohorts of families. I then argue that the increase in marriage age across these cohorts contributed to the assortative mating and thus to the rising inequality. Individuals face a large degree of uncertainty about their permanent wages early in their careers. If they marry early, as most individuals in the late 1960s did, this uncertainty leads to weak marital sorting along permanent wage. But when marriage is delayed, as in the late 1980s, the sorting becomes stronger due to the quick resolution of this uncertainty with work experience. After providing reduced-form evidence on the impact of marriage age, I build and estimate a marriage model with wage uncertainty and show that the increase in marriage age can explain almost 80% of the increase in assortative mating.

Marrying later contributes to this outcome because it allows each partner in a couple the time to demonstrate their earning capacities, which would either reinforce the pairing or lead to a breakup if the two are incompatibly mismatched. Tuncay's findings confirm what we've seen in the data for income inequality in the United States over that period of time, where there has been no change in the income inequality level for individuals, but a rising trend for both households and families as a greater emphasis on college education and early career establishment before marriage has become common in American society since the 1960s.

Assortative mating provides a very reasonable explanation for these outcomes. And we've just seen it at work, in all its oxytocin-enhanced sweetness, on The Bachelor Australia.

Labels: demographics, income inequality, math

New home prices rebounded in August 2019 according to the latest report from the U.S. Census Bureau on new residential sales.

Both median and average new home sale prices were up, with the initial figure for the average new home sale price reaching an all-time high of $404,200, beating December 2017's previous high value of $402,900. That new record high for average new home sale prices isn't yet official, as data for August 2019 will be revised several times in upcoming months before being finalized.

The following chart shows the Census Bureau's monthly data for average and median new home prices from January 2000 through August 2019's initial estimate.

The trends for both median and average new home sale prices are continuing to stabilize following what had been downward trends since late 2017. The following chart focuses just on the trailing twelve month average for median new home sale prices and their relationship with median household income, where we find that new home sale prices have mostly moved sideways after bottoming in March 2019, with falling mortgage rates stimulating the new home market.

Even though new home sale prices have begun to rebound, the continued growth of median household income means that the typical new home sold in the U.S. has been becoming relatively more affordable for the typical American household.

That outcome is evident in the following chart, where we've shown the ratio of the trailing twelve month averages of median new home sale prices to median household income. As of the preliminary data for August 2019, the median sale price of a new home in the U.S. has dropped below 5 times the median household income for the first time since December 2013. The ratio had previously peaked at 5.45 times median household income in February 2018.
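For readers who want to reproduce the ratio, here is a minimal sketch of the arithmetic, assuming you already have monthly series for median new home sale prices and median household income. The function names and the sample figures are hypothetical placeholders for illustration, not the Census Bureau's data or our actual spreadsheet.

```python
from statistics import mean


def trailing_mean(values, window=12):
    """Trailing average for every position that has a full window of data."""
    if window <= 0:
        raise ValueError("window must be positive")
    return [
        mean(values[i - window + 1 : i + 1])
        for i in range(window - 1, len(values))
    ]


def affordability_ratio(median_prices, median_incomes, window=12):
    """Ratio of trailing-average median price to trailing-average median income."""
    price_avg = trailing_mean(median_prices, window)
    income_avg = trailing_mean(median_incomes, window)
    return [p / y for p, y in zip(price_avg, income_avg)]


# Placeholder inputs only: 13 months of made-up prices and incomes.
prices = [300_000.0] * 12 + [324_000.0]
incomes = [60_000.0] * 13
ratios = affordability_ratio(prices, incomes)
print(ratios)
```

A reading below 5 in the output would correspond to the threshold discussed above, where the median new home costs less than five times the median household income.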

Elsewhere, Bill McBride believes 2019 may become the best year for new home sales in the U.S. since 2007. We'll update our analysis of the market cap for the new home sales market sometime in upcoming weeks to see if he's right!

Labels: real estate

How much can you expect to spend on regular car maintenance over its lifetime?

That will most likely depend on what kind of car you drive and how many miles you drive it, but if you want a generic estimate for an average car driven in the U.S., you've come to the right place because we've built a tool to estimate the cost!

To do that, we're drawing on data that YourMechanic published in 2016 using data from the roving car maintenance service provider's "huge dataset". And then, we took inflation for car repairs into account to make the numbers current for 2019.

That all happens in the tool below, which if you're accessing this article on a site that republishes our RSS news feed, you'll likely want to click through to access a working version of the tool on our site. To run the tool, just enter the mileage mark you want to consider, and we'll give you the average cumulative cost of maintenance up to that point based on YourMechanic's inflation adjusted numbers.

Overall, the tool is optimized to estimate average regular maintenance costs between 0 and 200,000 miles.

If you want to estimate the cost of maintenance between two mileage thresholds, try running the tool with the higher mileage first, then with the lower mileage, the result for which you'll need to subtract from your previous result. If you choose 25,000 mile intervals, the results will be similar to the data YourMechanic provided in their "How Do Maintenance Costs Vary With Mileage?" table, although after adjusting for three years of inflation.
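The subtraction described above can be sketched in a few lines. This is only an illustration: the cumulative cost table below is made up of round placeholder numbers, not YourMechanic's figures or the values inside our tool, and the linear interpolation between table points is an assumption made for the sketch.

```python
from bisect import bisect_right

# PLACEHOLDER data: (mileage, cumulative maintenance cost in dollars).
# These are NOT YourMechanic's numbers; substitute real figures before use.
HYPOTHETICAL_CUMULATIVE_COST = [
    (0, 0.0),
    (50_000, 2_000.0),
    (100_000, 6_000.0),
    (150_000, 12_000.0),
    (200_000, 20_000.0),
]


def cumulative_cost(miles, table=HYPOTHETICAL_CUMULATIVE_COST):
    """Linearly interpolate the cumulative cost at a given mileage."""
    mileages = [m for m, _ in table]
    if not mileages[0] <= miles <= mileages[-1]:
        raise ValueError("mileage is outside the range covered by the table")
    i = bisect_right(mileages, miles)
    if i == len(mileages):  # exactly at the last table entry
        return table[-1][1]
    (x0, y0), (x1, y1) = table[i - 1], table[i]
    return y0 + (y1 - y0) * (miles - x0) / (x1 - x0)


def interval_cost(low_miles, high_miles, table=HYPOTHETICAL_CUMULATIVE_COST):
    """Cost between two mileage marks: higher cumulative minus lower cumulative."""
    return cumulative_cost(high_miles, table) - cumulative_cost(low_miles, table)


print(interval_cost(50_000, 100_000))
```

Running the higher mileage first and subtracting the lower result, as described above, is exactly what `interval_cost` does.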

Now, how might you use this information to determine when it would be time to replace your car? Would it be when the cumulative cost exceeds the cost of a new car? Or would it be when the cost of maintenance between a certain mileage interval exceeds the resale value of your car?

Labels: personal finance, tool

We often use the phrase "Lévy flight events" to describe the outsized movements of stock prices, but we've never really addressed the reason why we use that terminology!

Let's start by looking at the day-to-day volatility of stock prices for the S&P 500 (Index: SPX) since 3 January 1950. In the following chart, we've shown that volatility as the percentage change from the previous trading day's closing value for the index, where we've also presented the mean and standard deviation of that variation all the way through the close of trading on Friday, 20 September 2019.

If the volatility of stock prices followed a normal Gaussian distribution, we would expect that:

- 68.3% of all observations would fall within one standard deviation of the mean trend line

- 95.5% of all observations would fall within two standard deviations of the mean trend line

- 99.7% of all observations would fall within three standard deviations of the mean trend line

But that's not what we see with the S&P 500's data, is it? For our 17,543 daily observations, we instead find:

- 78.7% of all observations fall within one standard deviation of the mean trend line

- 95.3% of all observations fall within two standard deviations of the mean trend line

- 98.6% of all observations fall within three standard deviations of the mean trend line

Already, you can see that the day-to-day variation in stock prices isn't normal, or rather, is not well described by a normal Gaussian distribution. While there are about as many observations between two and three standard deviations of the mean as we would expect in that scenario, there are way more observations within just one standard deviation of the mean than we would ever expect if stock prices were really normally distributed.

Additional discrepancies also show up the farther away from the mean you get. There are more outsized changes than if a normal distribution applied, which is to say that the real world distribution of stock price volatility has fatter tails than would be expected in such a Gaussian distribution.
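If you'd like to run this kind of check on your own price history, the comparison can be sketched as follows. This is a minimal illustration rather than the exact calculation behind our chart: the function names are ours, the use of the population standard deviation is an assumption, and the sample data is synthetic.

```python
import math
import random
from statistics import mean, pstdev


def daily_pct_changes(closes):
    """Percentage change from each trading day's close to the next."""
    return [100.0 * (b / a - 1.0) for a, b in zip(closes, closes[1:])]


def share_within(changes, k):
    """Observed fraction of changes within k standard deviations of the mean."""
    mu = mean(changes)
    sigma = pstdev(changes)  # assumption: population standard deviation
    return sum(abs(c - mu) <= k * sigma for c in changes) / len(changes)


def normal_share_within(k):
    """Fraction a Gaussian distribution places within k standard deviations."""
    return math.erf(k / math.sqrt(2.0))


# Synthetic Gaussian "returns" stand in for real index data here.
random.seed(0)
sample = [random.gauss(0.0, 1.0) for _ in range(20_000)]
for k in (1, 2, 3):
    print(k, round(share_within(sample, k), 3), round(normal_share_within(k), 3))
```

With truly Gaussian inputs, the observed and expected columns should roughly agree. Feeding in actual S&P 500 closing values through `daily_pct_changes` is where the gap at one standard deviation and in the tails would show up.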

There are other kinds of stable distributions that also have a central tendency for variation in data to appear near the mean that do a better job of describing the variation in stock prices. One of these was developed by French mathematician Paul Lévy and is now known as the Lévy distribution.

The Lévy distribution's applicability for describing the variation of stock prices was recently validated in a paper posted at arXiv by Takumi Fukunaga and Ken Umeno, who found that the Lévy distribution does a better job than the normal Gaussian distribution for the S&P 500, the Nikkei 225, the Dow 30, and the Shanghai Stock Exchange (SSE) indices. Figure 1 from the paper compares the probability density functions of the standardized raw data, the Lévy’s stable distribution with estimated parameters (α, β), and the Gaussian distribution, while Table 1 gives the Lévy’s stable distribution's parameters for each stock market index:

For all four stock market indices, the Lévy distribution outperforms the normal Gaussian distribution in describing the variation of stock prices. What's more, Fukunaga and Umeno find all four indices share very similar parameter values for their Lévy distributions in their period of interest from 2 January 1975 to their arbitrary cutoff date of 31 May 2017.

In terms of the generalized central limit theorem, Lévy’s stable distribution is theoretically more suitable than the Gaussian distribution for fitting the log-returns of the stock markets. The stock prices with power-law tails would not converge to the Gaussian distribution, since the classical central limit theorem cannot be applied in this case.

The parameters (α, β) show a similar value regardless of the stock index. The stability parameter α of all the stock indices were around α = 1.6 which seems to be universal, and lower than the Gaussian distribution corresponding to α = 2. As β has a negative value, it is shown that the stock market has a skewness. Then, the parameters fluctuate by dividing the analyzing time-windows. There is a correlation between the price and (α, β), especially when the financial crisis occurred.
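For readers wondering what the parameters (α, β) refer to, stable distributions are usually defined through their characteristic function rather than a closed-form density. The block below gives one common textbook parameterization for α ≠ 1. The scale symbol c and location symbol μ are our notation, and the paper's own parameterization may differ in detail.

```latex
\varphi(t) = \exp\!\Big( i\mu t - |c\,t|^{\alpha}
             \big[\, 1 - i\beta\,\operatorname{sgn}(t)\,\tan\!\big(\tfrac{\pi\alpha}{2}\big) \big] \Big),
\qquad 0 < \alpha \le 2,\ \alpha \neq 1,\ -1 \le \beta \le 1 .

% alpha = 2 recovers the Gaussian distribution (with variance 2c^2).
% For alpha < 2 the tails decay like a power law:
P(|X| > x) \sim C\, x^{-\alpha} \quad \text{as } x \to \infty .
```

In this form, α = 2 is the Gaussian case, while a value near α = 1.6 implies power-law tails, which is the "fatter tails" behavior described above. A negative β tilts the distribution toward the downside.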

Overall, we see that while there are still more observations concentrated around the means for each index than would be expected by either the normal Gaussian distribution or the Lévy’s stable distribution, the Lévy distribution comes much closer to accounting for that greater concentration than the Gaussian distribution does, while more accurately reflecting the frequency of large, outsized changes in stock prices.

And that's why we use the phrase "Lévy flight events" whenever we discuss days where stock prices changed by very large percentages from the previous day's closing value.

Labels: chaos, ideas, math, SP 500, stock market, stock prices

The S&P 500 (Index: SPX) didn't implode during the third week of September 2019, as the results of the drone and missile attacks on Saudi Arabia's oil fields proved to be less disruptive to global oil supplies than initially feared. At the same time, the potential for an immediate escalation in the conflicts between Saudi and Iranian interests in the Middle East was avoided, which allowed oil prices to return to lower, but still elevated levels after spiking sharply upward on Monday, 16 September 2019 in the initial response to the attacks.

And then, there was the Fed. The Fed acted to cut short term interest rates in the U.S. by another quarter point on Wednesday, 18 September 2019, which prompted a negative spike in the U.S. stock market immediately after the announcement, which then quickly dissipated after top Fed officials hinted at the return of its quantitative easing programs in upcoming months.

So while there was more than one major fireworks show that could churn up stock prices during the week, the potential effect on the S&P 500 was largely suppressed. If it weren't for Friday, 20 September 2019 being a quad witching day, marking the end of multiple futures contracts and raising the probability of volatility from all the associated trading activity, the overall change in the S&P 500 would have been suppressed to be within a point of where it closed the previous week. With the quad witching day however, the S&P 500 still ended the third week of September 2019 just 15.32 points lower than it closed the previous week.

Consequently, investors maintained their focus on the distant future quarter of 2020-Q1 in setting stock prices throughout the week, where stock prices ended the week just about exactly where our dividend futures-based model would put them with that particular focal point:

Sharp-eyed, regular readers will also recognize that we've removed the tan-shaded areas we added shortly after the 31 July 2019 - 5 August 2019 Lévy flight event, which began after the Fed shook investor expectations for future interest rate cuts.

That's because the model has continued to work well in anticipating future stock prices without adjustment, where the Fed's meeting this week provided us with the opportunity we needed to confirm its calibration. The trajectory of stock prices during this past week has proven to be well within the range of values we would expect if investors were closely focused on 2020-Q1, so the model is performing well in the current environment, as the flow of new information helps explain why they have kept their attention on that future quarter.

Speaking of the flow of new information, here are the headlines we tagged during the week that was for their market-moving potential:

- Monday, 16 September 2019

- Oil jumps nearly 15% in record trading after attack on Saudi facilities

- Explainer: How the Saudi attack affects global oil supply

- Evidence indicates Iranian arms used in Saudi attack, Riyadh says

- Iran's Rouhani says Aramco attacks were a reciprocal response by Yemen

- Trump authorizes release of U.S. oil reserves if needed because of Saudi attacks

- Bigger trouble developing in China:

- China's slowdown deepens; industrial output growth falls to 17-1/2 year low

- 'Very difficult' for China's economy to grow 6% or faster: Premier Li

- Bright spot? China's property investment growth at four-month high in August

- Wall Street drops after Saudi attacks, energy stocks spike

- Tuesday, 17 September 2019

- Oil plummets 6% as Saudi minister says supplies fully restored

- Exclusive: Saudi oil output to return faster than first thought - sources

- U.S. believes attack on Saudi Arabia came from southwest Iran

- Bigger trouble developing in China:

- China August factory deflation deepens, prices fall most in three years; pork prices soar

- China's home price growth at weakest in nearly a year, developers seen cutting prices

- U.S. manufacturing production rebounds, outlook remains weak

- Trump says U.S. reaches trade deals with Japan, no vote needed

- Fed rate cut a coin toss, futures imply

- Divided Fed set to cut interest rates this week, but then what?

- U.S. effective fed funds rate jumps on Monday: New York Fed data

- Wall Street rises as oil fears recede, market awaits Fed

- Wednesday, 18 September 2019

- Oil prices extend losses after Saudi pledge to restore lost output

- Saudi oil attacks came from southwest Iran, U.S. official says, raising tensions

- Trump says he has ordered substantial increase of Iran sanctions

- U.S. fed funds rate breaks above Fed's target range

- Explainer: The Fed has a repo problem. What's that?

- Analyst view: Fed's day two of cash injection into U.S. banking system

- Fed might end reserve run-off, ramp up bond purchases: analysts

- Fed cuts interest rates, signals holding pattern for now

- S&P 500 ends slightly higher after Fed gives mixed signals

- Thursday, 19 September 2019

- Brent rises 1% as Saudi supply risks come into focus

- Bigger trouble developing ~~in China~~ all over:

- China's growth could slip below 6%, analysts warn, as trade war takes toll

- ECB's stimulus weapon has weak start

- OECD cuts growth outlook to post-crisis low

- Trump adviser says U.S. president ready to escalate trade war if no deal agreed soon: SCMP

- Wall Street mixed as Microsoft climbs and Apple dips

- Friday, 20 September 2019

- Oil slips on trade fears but soars in week after Saudi production attacked

- Fed in three voices: recession, bubbles, and 'in a good place'

- Fed's Bullard, explaining dissent, says U.S. manufacturing appears 'in recession'

- Fed's Rosengren says interest rate cuts are 'not costless'

- Fed's Rosengren: Will worry when consumers show worry

- Interesting sidebar: Fed's Rosengren flags risks to economy in WeWork-style model

- Fed's Kaplan says he penciled in no further rate cuts in 2019

- Fed's Clarida hails strong U.S. consumer but says risks remain

- Wall Street drops after China cancels trip to Montana farmland

Need a bigger picture on what happened in the third week of September 2019? Barry Ritholtz has you covered, providing a list of 6 positives and 6 negatives he found in the week's economics and market-related news.

The easy question for the weeks ahead is: "what will happen to stock prices if investors shift their attention to another point of time in the future?" The answer to that is a simple problem in quantum kinematics. The big money question is: "how long will investors be compelled to hold their attention on 2020-Q1 before they shift it to a different point of time in the future?"

And regardless of how you might answer that question, how would you set up your investing strategy to take advantage of any such shift when it might develop into an opportunity?

Pi is an irrational number, which is to say that it is a real number that cannot be precisely written as the ratio of two integers in a simple fraction.

That's not to say that you cannot reasonably approximate the value of pi with such a fraction however - it's just a question of how much error you're willing to live with by doing so. If the results of your math would be okay if you only approximated pi to just two decimal places, which in decimal form is 3.14, you could substitute the rational fraction 22/7. If you needed up to six decimal places of rounded precision, 3.141593, you could use the still easy-to-remember fraction 355/113 instead.
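Both fractions are easy to check for yourself. The short sketch below uses Python's standard `fractions` module, whose `limit_denominator` method returns the closest fraction with a denominator no larger than the limit you give it. The helper name `best_fraction` is ours.

```python
import math
from fractions import Fraction


def best_fraction(x, max_denominator):
    """Closest fraction to x whose denominator does not exceed max_denominator."""
    return Fraction(x).limit_denominator(max_denominator)


for max_den in (10, 1000):
    frac = best_fraction(math.pi, max_den)
    error = abs(float(frac) - math.pi)
    print(f"{frac} approximates pi with an error of {error:.2e}")
```

Capping the denominator at 10 yields 22/7, while raising the cap to 1,000 yields 355/113, which shows the trade-off between the size of the denominator and the size of the error.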

But how well can any irrational number like pi, e, phi, or √2 be approximated with a simple fraction with an integer numerator and denominator?

That has been an open question since 1941, when Richard Duffin and Albert Schaeffer conjectured that for whatever level of error you're willing to live with in your rational approximation of an irrational number, you can either find a nearly infinite number of possible fractions or almost none. Here's how Quanta Magazine's Kevin Hartnett described the conjecture:

The Duffin-Schaeffer conjecture is an attempt to provide the most general possible framework for thinking about rational approximation. In 1941 the mathematicians R.J. Duffin and A.C. Schaeffer imagined the following scenario. First, choose an infinitely long list of denominators. This could be anything you want: all odd numbers, all numbers that are multiples of 10, or the infinite list of prime numbers.

Second, for each of the numbers in your list, choose how closely you’d like to approximate an irrational number. Intuition tells you that if you give yourself very generous error allowances, you’re more likely to be able to pull off the approximation. If you give yourself less leeway, it will be harder....

Now, given the parameters you’ve set up — the numbers in your sequence and the defined error terms — you want to know: Can I find infinitely many fractions that approximate all irrational numbers?

The conjecture provides a mathematical function to evaluate this question. Your parameters go in as inputs. Its outcome could go one of two ways. Duffin and Schaeffer conjectured that those two outcomes correspond exactly to whether your sequence can approximate virtually all irrational numbers with the demanded precision, or virtually none. (It’s “virtually” all or none because for any set of denominators, there will always be a negligible number of outlier irrational numbers that can or can’t be well approximated.)
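The "mathematical function" mentioned in that description can be written out explicitly. What follows is the standard textbook statement of the conjecture, in our own notation rather than anything quoted from the Quanta article: ψ(q) sets the error allowance for each denominator q, and φ is Euler's totient function.

```latex
\text{Let } \psi : \mathbb{N} \to [0, \infty). \text{ For almost every real } \alpha,
\text{ the inequality }
\left| \alpha - \frac{a}{q} \right| < \frac{\psi(q)}{q}
\text{ has infinitely many solutions with } \gcd(a, q) = 1
\text{ if and only if }
\sum_{q = 1}^{\infty} \frac{\varphi(q)\, \psi(q)}{q} = \infty .
```

Excluding a denominator from your list simply means setting ψ(q) = 0 for it. If the sum diverges, virtually all numbers can be approximated as demanded. If it converges, virtually none can.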

In what may be the biggest math story of 2019, the Duffin-Schaeffer conjecture may have been proven this past summer. Dimitris Koukoulopoulos and James Maynard posted a preprint of their paper confirming that choosing smaller 'acceptable' error ranges makes it harder to approximate irrational numbers with simple fractions, which would be a remarkable advance in the field of number theory.

Scientific American's Leila Sloman describes their approach:

Maynard and Koukoulopoulos knew that previous work in the field had reduced the problem to one about the prime factors of the denominators—the prime numbers that, when multiplied together, yield the denominator. Maynard suggested thinking about the problem as shading in numbers: “Imagine, on the number line, coloring in all the numbers close to fractions with denominator 100.” The Duffin-Schaeffer conjecture says if the errors are large enough and one does this for every possible denominator, almost every number will be colored in infinitely many times.

For any particular denominator, only part of the number line will be colored in. If mathematicians could show that for each denominator, sufficiently different areas were colored, they would ensure almost every number was colored. If they could also prove those sections were overlapping, they could conclude that happened many times. One way of capturing this idea of different-but-overlapping areas is to prove the regions colored by different denominators had nothing to do with one another—they were independent.

But this is not actually true, especially if two denominators share many prime factors. For example, the possible denominators 10 and 100 share factors 2 and 5—and the numbers that can be approximated by fractions of the form n/10 exhibit frustrating overlaps with those that can be approximated by fractions n/100.

Maynard and Koukoulopoulos solved this conundrum by reframing the problem in terms of networks that mathematicians call graphs—a bunch of dots, with some connected by lines (called edges). The dots in their graphs represented possible denominators that the researchers wanted to use for the approximating fraction, and two dots were connected by an edge if they had many prime factors in common. The graphs had a lot of edges precisely in cases where the allowed denominators had unwanted dependencies.

Using graphs allowed the two mathematicians to visualize the problem in a new way. “One of the biggest insights you need is to forget all the unimportant parts of the problem and to just home in on the one or two factors that make [it] very special,” says Maynard. Using graphs, he says, “not only lets you prove the result, but it’s really telling you something structural about what’s going on in the problem.” Maynard and Koukoulopoulos deduced that graphs with many edges corresponded to a particular, highly structured mathematical situation that they could analyze separately.

The graphs they develop to map the greatest common divisors for the rational approximations of irrational numbers are bipartite graphs. The following video provides a short introduction:

The Koukoulopoulos-Maynard proof of the Duffin-Schaeffer conjecture is now in the process of being validated. If determined to be valid, the proof may have an immediate impact on the related field of p-Adic approximation, which would have applications in quantum mechanics and field theory, as well as resolving other conjectures in number theory that rely on the Duffin-Schaeffer conjecture being true.

Update: A very timely xkcd cartoon from How To author Randall Munroe!

Image Credit: Stack Overflow

Labels: math

As expected, the Federal Reserve acted to cut short term interest rates in the United States on 18 September 2019, reducing its target range for the Federal Funds Rate from 2.00%-2.25% down to 1.75%-2.00%.

That cut comes as the odds of a national recession starting in the U.S. sometime during the next twelve months, as might be determined at a later date by the National Bureau of Economic Research, has continued to drift higher even as the Fed has begun cutting interest rates, with just over a 11% probability according to the methodology laid out by the Federal Reserve Board's Jonathan Wright in a 2006 paper. This quarter point reduction was the second quarter point cut following the cut announced on 31 July 2019 at the conclusion of the Federal Open Market Committee's previous meeting.

Stock prices quickly dropped by nearly one percent from the previous day's closing value shortly after the announcement, as investors were looking for signs the Fed would start implementing quantitative easing in addition to the rate cut. That disappointment didn't last long, because Fed Chair Jerome Powell's comments during the following press conference hinted the Fed would be likely to start new rounds of quantitative easing in the near future when he said that "it is certainly possible that we'll need to resume the organic growth of the balance sheet sooner than we thought."

That prospect sent bank stocks up sharply and led the S&P 500 (Index: SPX) to close slightly higher for the day. The question now is whether quantitative easing will become a virtually permanent feature of the Fed's open market operations.

Updating the Recession Probability Track, we find that the rate at which the probability of recession is rising using Wright's methods is indeed beginning to slow, with just over a one-in-nine chance of a recession beginning in the U.S. before October 2020.

The Recession Probability Track is based on Jonathan Wright's recession forecasting method using the level of the effective Federal Funds Rate and the spread between the yields of the 10-Year and 3-Month Constant Maturity U.S. Treasuries. If you would like to run your own recession probability scenarios, as we recently did after factoring in all the quantitative tightening the Fed achieved prior to its policy reversal in July 2019, please take advantage of our recession odds reckoning tool.

It's really easy. Plug in the most recent data available, or the data that would apply for a future scenario that you would like to consider, and compare the result you get in our tool with what we've shown in the most recent chart we've presented above.
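For readers who would rather see the math as code, here is a rough Python sketch of a probit-style calculation of the kind Wright's method uses, where the probability is the standard normal cumulative distribution evaluated at a linear combination of the Treasury yield spread and the Federal Funds Rate. The coefficients must be supplied by the caller. We have not reproduced Wright's published estimates here, and the function and parameter names are our own assumptions for illustration.

```python
import math


def normal_cdf(x):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def recession_probability(spread, fed_funds, intercept, coef_spread, coef_fed_funds):
    """Probit-style recession probability.

    spread:     10-Year minus 3-Month Treasury yield, in percentage points
    fed_funds:  effective Federal Funds Rate, in percent
    intercept, coef_spread, coef_fed_funds: model coefficients, which are
        placeholders here and must come from the source paper or your own fit
    """
    index = intercept + coef_spread * spread + coef_fed_funds * fed_funds
    return normal_cdf(index)


def one_in_n(probability):
    """Express a probability as the N in a '1 in N' chance."""
    return 1.0 / probability
```

The `one_in_n` helper shows how a percentage maps onto the headline odds used in this series: a probability of 0.11 works out to roughly a 1-in-9 chance.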

If you would like to catch up on any of the analysis we've previously presented, here are all the links going back to when we restarted this series in June 2017.

Previously on Political Calculations

- The Return of the Recession Probability Track

- U.S. Recession Probability Low After Fed's July 2017 Meeting

- U.S. Recession Probability Ticks Slightly Up After Fed Does Nothing

- Déjà Vu All Over Again for U.S. Recession Probability

- Recession Probability Ticks Slightly Up as Fed Hikes

- U.S. Recession Risk Minimal (January 2018)

- U.S. Recession Probability Risk Still Minimal

- U.S. Recession Odds Tick Slightly Upward, Remain Very Low

- The Fed Meets, Nothing Happens, Recession Risk Stays Minimal

- Fed Raises Rates, Recession Risk to Rise in Response

- 1 in 91 Chance of U.S. Recession Starting Before August 2019

- 1 in 63 Chance of U.S. Recession Starting Before September 2019

- 1 in 54 Chance of U.S. Recession Starting Before November 2019

- 1 in 42 Chance of U.S. Recession Starting Before December 2019

- 1 in 26 Chance of U.S. Recession Starting Before February 2020

- 1 in 16 Chance of U.S. Recession Starting Before April 2020

- 1 in 14 Chance of U.S. Recession Starting Before April 2020

- 1 in 13 Chance of U.S. Recession Starting Before May 2020

- 1 in 12 Chance of U.S. Recession Starting Before June 2020

- 1 in 11 Chance of U.S. Recession Starting Before July 2020

- Odds of U.S. Recession Before August 2020 Rise to 1 in 10

- 1 in 10 Chance of U.S. Recession Starting Before August 2020

- What The Dickens Is Going On With Recession Indicators?

Labels: recession forecast

African swine fever (ASF) is devastating China's domestic hog herd. Starting with an estimated population of 460 million hogs, China has lost at least 100 million hogs, about 21% of the country's total herd, to the infectious and incurable disease, and is expected to see further losses of at least another 10% in the next year. Rabobank projects the losses could reach 50% of the country's overall pig herd before the disease has run its course.

As you might imagine, the growing shortage of domestically produced pork in China has affected prices for the meat, which we're featuring in the following chart, comparing China's prices with U.S. hog prices per hundredweight over the past year.

Our sources for the data are below, where we recognize we may not have a proper apples-to-apples comparison between the two countries' hog prices. The U.S. hog prices are for "national base 51-52% lean" barrows and gilts, while the Chinese prices are simply given in yuan per kilogram, which we converted to U.S. dollars per 100 pounds. There may be a conversion factor that needs to be incorporated to make them truly equivalent, where our estimates for the Chinese prices would be off by a consistent scale factor.
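The unit conversion described above can be sketched in a few lines of Python. This is only an illustration of the arithmetic, assuming the exchange rate is quoted as yuan per U.S. dollar. The function name and the sample inputs are hypothetical and are not the figures behind our chart.

```python
KG_PER_POUND = 0.45359237  # exact definition of the international pound


def cny_per_kg_to_usd_per_cwt(price_cny_per_kg, cny_per_usd):
    """Convert a price in yuan per kilogram to U.S. dollars per 100 pounds.

    price_cny_per_kg: price in Chinese yuan per kilogram
    cny_per_usd:      exchange rate, quoted as yuan per one U.S. dollar
    """
    usd_per_kg = price_cny_per_kg / cny_per_usd
    usd_per_pound = usd_per_kg * KG_PER_POUND
    return usd_per_pound * 100.0


# Hypothetical inputs: 30 yuan per kilogram at 7 yuan per dollar.
example = cny_per_kg_to_usd_per_cwt(30.0, 7.0)
```

With those made-up inputs, the result is a little over $194 per hundredweight. Because any missing adjustment between the two price series would act as a constant multiplier, it would change the level of the converted prices but not the shape of their trend.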

As such, the more important factor to consider is the trend in China's prices, which have spiked upward in recent months after having largely paralleled U.S. prices through May 2019. It also appears that Chinese prices may lead U.S. prices, which we'll continue monitoring in upcoming months to see if the spike in Chinese pork prices comes to be reflected in U.S. prices. With China exempting U.S. hogs from its trade war tariffs during the past week to help alleviate the shortages the country is facing, the resulting increase in quantity demanded for U.S.-produced pork should put upward pressure on U.S. prices in the near term, assuming no other changes in the supply and demand for U.S.-produced pork.

In theory, anyway. We'll see what happens in practice.

References

U.S. Department of Agriculture. Livestock Prices (Hog Prices). [Excel spreadsheet]. Accessed 17 September 2019.

Pig333. Pig Prices in China (CNY/kg). [Online Database]. Accessed 17 September 2019.

Federal Reserve. China/U.S. Foreign Exchange Rate. [Online Database]. Accessed 17 September 2019.

Metric Conversions. Weight Conversion. [Online Application]. Accessed 17 September 2019.


Labels: food

Nationally, the total valuation of aggregate existing home sales has continued to dip in June 2019 from April 2019's level. All but the U.S. Census Bureau's Northeast region have seen dips from recent peaks.

Preliminary and revised state level existing home sales data through June 2019 is now available from Zillow's databases, which now includes data from New Hampshire in the period July 2017 to the present. The following chart illustrates the trends we see for the 44 states and the District of Columbia for which Zillow provides seasonally-adjusted sale prices and volumes for existing homes.

The following charts break the national aggregate existing home sales totals down by U.S. Census Bureau major region from January 2016 through June 2019. The first two charts below show the trends for the West and the Northeast, which have respectively been the weakest and strongest regions in the nation over the last several months. [Please click on the individual charts to see larger versions.]

Meanwhile, the U.S. South and Midwest regions have seen relatively flat levels of aggregate existing home sales since early 2018, although recently revised data indicates that aggregate sales in the Midwest reached a peak in April 2019. In the months since, preliminary data indicates that both regions have seen a softening in existing home sales.

Of all these regions, the West has shown the most weakness, with California's market accounting for the lion's share of that weakness since March 2018.

Aside from California, which is the 800-pound gorilla of state-level real estate markets, Washington, Colorado, Utah, and Oregon have also seen declines in recent months, with other states' markets appearing relatively flat. Only Arizona stands out with a rising volume of existing home sales in the last several months.
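For readers curious how a figure like the aggregate valuation of existing home sales could be assembled from state-level prices and volumes, here is one plausible sketch in Python. It assumes the aggregate is simply each state's sale price multiplied by its number of sales, summed across states. That is our assumption for illustration rather than a description of the exact calculation behind the charts, and the function name and sample figures are hypothetical.

```python
def aggregate_sales_value(state_data):
    """Sum price times sales count across states.

    state_data maps a state code to a (sale_price, sales_count) pair.
    """
    return sum(price * count for price, count in state_data.values())


# Made-up monthly figures for two states, for illustration only.
sample = {
    "AZ": (250_000.0, 9_000),
    "CA": (550_000.0, 30_000),
}
total = aggregate_sales_value(sample)
```

In this made-up sample, the larger state contributes the bulk of the total, which shows how a single big market can dominate a regional aggregate.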

Labels: real estate

After all the fireworks of volatility over the last several weeks, the second week of trading in September 2019 saw precious little for the S&P 500 (Index: SPX), which closed up for the entire week by less than 1% from the previous week's close.

All the action during the week was fully consistent with investors being closely focused on 2020-Q1 in setting current day stock prices, as suggested by our alternate futures spaghetti chart.

The reason for that was an outbreak of relatively good news, which has reduced the odds of future Fed rate cuts in upcoming months. As of the close of trading on Friday, 13 September 2019, the CME Group's FedWatch Tool is projecting quarter point rate cuts at the conclusion of the Fed's upcoming meetings this week, and again in December 2019. But whether there will be another in 2020-Q1 has become an open question, which is why investors would continue to be focusing on that particular distant future quarter:

We said there was an outbreak of good news, and we meant it! Here are the headlines that caught our attention during the less-than-volatile week that was:

- Monday, 9 September 2019

- Oil gets boost as new Saudi minister commits to output cuts

- Bigger stimulus developing all over:

- China to further support private firms, boost manufacturing - state media

- China's August new loans seen up slightly, more easing expected: Reuters poll

- Time for shock and awe: Five questions for the ECB

- Wall Street ends flat amid rate hopes, tech declines

- Tuesday, 10 September 2019

- Oil falls on possibility of Iran exports resuming after Trump fires hard-line adviser

- Bigger trouble developing all over:

- China August factory deflation deepens, prices fall most in three years; pork prices soar

- Australia business conditions deteriorate again in August: survey

- Exclusive: Waning confidence over global recovery may nudge BOJ closer to easing - sources

- Inverted yield curves 'not a vote of confidence': BoE's Carney

- Wall Street mixed as investors flee growth for value

- Wednesday, 11 September 2019

- Oil prices slide 2% after report Trump weighed easing Iran sanctions

- Bigger trouble developing all over:

- China's auto sales face more bumps ahead, industry body warns, after latest slump

- German institutes see recession, cut growth forecasts for 2019, 2020

- Bigger stimulus developing all over:

- China bank loans up in August, more stimulus expected

- Chile could cut rates but sub-zero, QE talk premature: central bank governor

- Trump reverses course, seeks negative rates from Fed 'boneheads'

- Trade hopes buoy Wall Street as China extends olive branch

- Thursday, 12 September 2019

- Oil prices fall 1% on U.S.-China trade doubts, OPEC+ talks

- Bigger trouble developing all over:

- Bigger stimulus developing all over:

- ECB cuts key rate, to restart bond purchases

- Draghi ties Lagarde's hands with promise of indefinite stimulus

- Draghi's parting shot leaves next ECB boss with existential dilemma

- Explainer: How does negative interest rates policy work?

- Denmark's central bank cuts key interest rate to historic low

- BOJ considering ways to deepen negative rates at minimal cost: sources

- Chinese and U.S. officials will meet next week to discuss trade: Xinhua

- Exclusive: Ahead of trade talks, China makes biggest U.S. soybean purchases since June - traders

- China to exempt U.S. pork, soybeans from additional tariffs: Xinhua

- Trump: would consider interim trade deal with China

- Wall Street ends higher on trade, ECB stimulus hopes

- Friday, 13 September 2019

Elsewhere, Barry Ritholtz listed six positives and six negatives he found in the week's economics and market-related news over at the Big Picture.

Given overseas events, we're afraid the upcoming week will be quite different.

