We're closing 2018 out in high style with our annual celebration of the biggest math stories of the year. By the end of this post, we'll have identified what we believe is the biggest math story of the year!

One thing we're introducing this year is embedded videos, which provide background information for some of the more complex topics behind the year's math-related stories. If you're accessing this article on a site that republishes our RSS news feed, they will likely be featured *very* prominently, but if you click through to the original version of this article that appears on our site, you will find them better integrated into the article's overall visual format.

That said, let's begin this year's edition with breaking news, where 2018 has seen the confirmation of a new prime number, one that requires 24,862,048 digits to fully write out, but is much more easily written as an equation (2^82,589,933 - 1) and is the 51st member of the Mersenne family of prime numbers.
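For readers who want to check the digit count for themselves, here is a minimal sketch that counts the decimal digits of a Mersenne number from its exponent alone, without ever building the number. The function name is our own invention for illustration.

```python
import math


def mersenne_digit_count(p: int) -> int:
    """Count the decimal digits of 2**p - 1 without constructing it."""
    # 2**p is never a power of 10, so subtracting 1 does not change
    # the number of digits: the count is floor(p * log10(2)) + 1.
    return math.floor(p * math.log10(2)) + 1


# Exponent of the newly confirmed Mersenne prime.
print(mersenne_digit_count(82_589_933))  # prints 24862048
```

The logarithm trick matters here because writing out the full number just to count its digits would be far more work than this single multiplication.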

It was first identified on 7 December 2018 as part of the Great Internet Mersenne Prime Search (GIMPS), which allows math enthusiasts to participate in the hunt for prime numbers by downloading free software to run on their personal computing systems that coordinates and distributes the work of searching for Mersenne primes among volunteers. In this case, the world's new largest known prime number was identified on Florida information technology professional Patrick Laroche's home computer, confirmed, then announced on 21 December 2018.
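To give a feel for the kind of work being distributed, here is a small sketch of the Lucas-Lehmer test, the classic primality test for Mersenne numbers. This is only an illustration for small exponents and is not the GIMPS software itself, which relies on far more heavily optimized arithmetic; the helper names are our own.

```python
def is_prime(n: int) -> bool:
    """Trial-division primality check, adequate for small exponents."""
    if n < 2:
        return False
    i = 2
    while i * i <= n:
        if n % i == 0:
            return False
        i += 1
    return True


def lucas_lehmer(p: int) -> bool:
    """Return True if 2**p - 1 is prime, given a prime exponent p."""
    if p == 2:
        return True  # 2**2 - 1 = 3 is prime; the recurrence needs odd p
    m = (1 << p) - 1
    s = 4
    # Iterate s -> s*s - 2 (mod m) exactly p - 2 times.
    for _ in range(p - 2):
        s = (s * s - 2) % m
    return s == 0


# Exponents below 130 that produce Mersenne primes.
exponents = [p for p in range(2, 130) if is_prime(p) and lucas_lehmer(p)]
print(exponents)  # prints [2, 3, 5, 7, 13, 17, 19, 31, 61, 89, 107, 127]
```

Each exponent takes p - 2 squarings of a p-bit number, which is why an exponent in the tens of millions is a job for a patient computer.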

This discovery captures many of the elements that define the biggest math story of the year, which is why we've chosen to lead this year's edition with this particular tale. Overall, the year featured a lot of convolutions in the field of mathematics with some very notable mathematicians making news for their work, but there is an underlying theme connecting several of the stories that appeared throughout the year that, in combination, produces the biggest math story of 2018.

Having made that introduction, let's continue summing up the year's bigger math stories....

As mathematical propositions go, the famed ABC Conjecture from number theory has truly unique beginnings, having reportedly been concocted at a cocktail party by its originators, mathematicians Joseph Oesterlé and David Masser, in the 1980s, and it has tormented professional mathematicians ever since. Like many other seemingly simple propositions that arise at the intersection of alcohol consumption and math, it has turned out to be devilishly difficult to prove. The most promising attempt to put forward a valid proof of the conjecture to date, by Shinichi Mochizuki, is so complex that other mathematicians have considered it almost impenetrable, and therefore unconfirmable, since it was published in 2012.

That state of affairs lasted until 2018, when Peter Scholze and Jakob Stix identified what appears to be a fatal flaw in a portion of Mochizuki's work, invalidating the proof.

For mathematicians, the discovery of an error in a proof is an important step forward because the negative result closes off a blind alley that might detour mathematicians away from producing a valid proof of the conjecture and can redirect them onto an approach that succeeds in getting to a valid proof. That most famously happened when an error was found in a portion of Andrew Wiles' initial proof of Fermat's Last Theorem, which ultimately led to its correction and subsequent official confirmation.

The biggest math story of 2017 revolved around the disproof of part of the Navier-Stokes equation, so this kind of thing is a very big deal. But it's not the biggest math story of 2018!

Perhaps the biggest publicity splash in the mathematical world of 2018 came in late September, when the distinguished mathematician Michael Atiyah announced that he had found a proof for the Riemann Hypothesis while developing a mathematical foundation for the Fine Structure Constant. If Atiyah's claim holds, it would perhaps be the biggest twofer ever in the fields of mathematics and physics.

For our money, the best live reporting of Atiyah's presentation came from Markus Pössel's Twitter feed, which features some wonderfully dry humor at its very best.

Like Mochizuki's proof of the ABC Conjecture before it, however, Atiyah's potential million-dollar-prize-winning proof of the Riemann Hypothesis has mathematicians viewing the claim with caution. The presented proof depends upon a number of concepts that are not well established among mathematicians and is thus being treated with appropriate skepticism.

How uncertain are mathematicians about Atiyah's proof? It hinges on Atiyah's development of the Todd function, which he named after one of his mentors, J.A. Todd, and has described as "a very very clever function that maps Euler’s equation to its quaternionic generalization, and is defined by an infinite iteration of exponentials". [Note: we've added the links in the quote to provide additional background, but they don't go very far in explaining exactly what it is.]

In the final measure, that all makes for a big math story, but alas, in the absence of a clear confirmation of the proof, not the biggest....

One story that we followed with interest in 2018 involved the use of math to detect partisan gerrymandering in drawing election districts in the U.S., which promised to provide a lot of fireworks because the U.S. Supreme Court was slated to hear two cases where that math would be front and center in confronting how the boundaries of voting districts were drawn in Wisconsin and in Maryland to favor the political party in each state that controlled the process of remapping their boundaries.

The legal cases fizzled out, however, when the Supreme Court unanimously ruled in the Wisconsin case that the plaintiffs had failed to demonstrate that they had been directly injured by the practice, and in the Maryland case, the justices unanimously ruled that the plaintiffs had waited too long after the redistricting to sue for relief.

Consequently, there has not yet been any case where the findings of the math developed to detect political gerrymandering have been argued at the Supreme Court. That might change in 2019, as two other cases are currently lurking in the country's lower courts, but it is perhaps unlikely that the justices would consider disrupting the upcoming 2020 election cycle with any non-status quo rulings, especially when that year will see the next decennial U.S. Census that will lead to nearly all legislative districts being redrawn across the United States anyway.

Our next top math story for 2018 lies at the intersection between computer science and quantum physics.

Quantum computing is an up-and-coming technological field that utilizes the extraordinary properties of subatomic-size matter to perform massive numbers of calculations that can theoretically outstrip the computational performance of today's fastest supercomputers. The technology and the math that enable this increased capability have the potential to deliver exponential improvements to computing technology.

Part of its promise is that quantum computers will be capable of performing calculations that conventional computers cannot do, which leads to a very serious technical challenge in establishing the validity of any results that might be obtained.

How can you check that a quantum computer is running its code properly and doing what it is supposed to be doing?

If you distrust an ordinary computer, you can, in theory, scrutinize every step of its computations for yourself. But quantum systems are fundamentally resistant to this kind of checking. For one thing, their inner workings are incredibly complex: Writing down a description of the internal state of a computer with just a few hundred quantum bits (or “qubits”) would require a hard drive larger than the entire visible universe.

And even if you somehow had enough space to write down this description, there would be no way to get at it.
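To put a rough number on the claim quoted above, here is a minimal sketch that counts how many complex amplitudes it takes to describe the general state of n qubits, which is 2 to the power n. The comparison figure of about 10^80 atoms in the observable universe is a commonly cited rough estimate that we are assuming for illustration; it does not come from the quoted article.

```python
def amplitudes_needed(n_qubits: int) -> int:
    """Number of complex amplitudes in a general n-qubit state vector."""
    return 2 ** n_qubits


# Rough, commonly cited estimate (an assumption for illustration only).
ATOMS_IN_OBSERVABLE_UNIVERSE = 10 ** 80

for n in (10, 50, 300):
    count = amplitudes_needed(n)
    exceeds = count > ATOMS_IN_OBSERVABLE_UNIVERSE
    print(f"{n:>3} qubits: {count:.3e} amplitudes, more than atoms? {exceeds}")
```

Under that assumption, even storing one amplitude per atom would fall hopelessly short at 300 qubits, which is the sense in which the description outgrows the universe.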

That problem may have been solved by a grad student at the University of California-Berkeley. Urmila Mahadev has been working on answering this basic question over the last eight years, and has developed an algorithmic solution:

She has come up with an interactive protocol by which users with no quantum powers of their own can nevertheless employ cryptography to put a harness on a quantum computer and drive it wherever they want, with the certainty that the quantum computer is following their orders.

That's the kind of practical achievement in the application of math that we seek to highlight, and it's a major step forward in an emerging field with unique challenges that has just been written a very big check by the U.S. government.

Our next story begins with the 14-episode long first season of an anime series, The Melancholy of Haruhi Suzumiya, which has baffled its fans ever since it originally aired on Japanese television because the episodes, which feature time travel, have never, ever been presented in any kind of consistent or linearly chronological order in any of its various releases.

Back in 2011, several of the series' fans were arguing on the 4chan social media site about the best order in which to watch the episodes, which naturally led to a bigger mathematical question: what is the smallest number of episodes a viewer would have to sit through in order to watch the episodes in every possible order? That question led one anonymous 4chan poster to present a method (republished here) for finding the answer to that mathematical question.

Flash forward several years, when science fiction author Greg Egan (notably the author of 1994's Permutation City) worked out a proof that set an upper limit on the length of the shortest sequence in which a number of unique symbols (or items, or episodes, etc.) appear in every possible order, or rather, a superpermutation.

The two proofs together represent the lower and upper bound solutions for what has come to be known as "The Haruhi Problem", which mathematicians Robin Houston, Jay Pantone, and Vince Vatter have now developed into a formal proof, where the lead author is identified as "Anonymous 4chan Poster". The proofs are useful beyond identifying all the possible ways the episodes of a television series might be watched because they can be applied to solving the asymmetric traveling salesman problem, where they can determine the minimum number of potential combinations that need to be considered to find an optimal solution.
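For the curious, the two bounds described above can be sketched in a few lines. The formulas below are the lower and upper bounds as they are commonly stated for a superpermutation on n symbols, with the lower bound credited to the anonymous poster and the upper bound to Egan's construction (which is usually quoted for n of 7 or more). The function names are ours, and the small checker simply confirms that a candidate string really does contain every ordering.

```python
from itertools import permutations
from math import factorial


def lower_bound(n: int) -> int:
    """Commonly stated lower bound on the shortest superpermutation length."""
    return factorial(n) + factorial(n - 1) + factorial(n - 2) + n - 3


def upper_bound(n: int) -> int:
    """Commonly stated upper bound from Egan's construction (n >= 7)."""
    return (factorial(n) + factorial(n - 1) + factorial(n - 2)
            + factorial(n - 3) + n - 3)


def is_superpermutation(sequence: str, symbols: str) -> bool:
    """True if every ordering of symbols appears as a substring of sequence."""
    return all("".join(p) in sequence for p in permutations(symbols))


# A 9-character string that covers all six orderings of three symbols.
print(is_superpermutation("123121321", "123"))  # prints True

# Bounds for the 14 episodes of the first season.
print(lower_bound(14))  # prints 93884313611
print(upper_bound(14))  # prints 93924230411
```

In other words, a viewer determined to see all 14 episodes in every possible order would need to sit through somewhere between about 93.88 billion and 93.92 billion episodes.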

This story makes the cut as one of the biggest math stories in 2018 because it demonstrates that like good anime, good math is wherever you find it - it doesn't require dedicated teams of professional mathematicians to come about. It is also very timely because Black Mirror fans may soon be trying to solve their own version of the Haruhi problem when Black Mirror: Bandersnatch premieres....

Many mathematical questions are deceptively simple. Consider this one: "What is the shape with the smallest area that can completely cover a host of other shapes (which all share a certain trait in common)?"

That question was first asked by French mathematician Henri-Léon Lebesgue in 1914. Since then, the problem has largely stymied the mathematicians who attempted to solve it because anytime a possible universal covering shape was found, the question of whether it was the smallest one possible remained. It was also something of a niche problem, where many mathematicians simply preferred to pursue solutions for other problems that held greater interest for them.

Roughly 99 years later, the unsolved problem was the subject of a blog post by University of California-Riverside math professor John Baez. That blog post was read by a former software engineer, Philip Gibbs, who was looking for an intellectual challenge to take on in his retirement.

Gibbs, who had an undergraduate degree in mathematics and a PhD in physics, thought the problem lent itself to an iterative approach that fit his programming background, which led to the first major progress in addressing the question in decades:

In 2014 Gibbs ran computer simulations on 200 randomly generated shapes with diameter 1. Those simulations suggested he might be able to trim some area around the top corner of the previous smallest cover. He turned that lead into a proof that the new cover worked for all possible diameter-1 shapes. Gibbs sent the proof to Baez, who worked with one of his undergraduate students, Karine Bagdasaryan, to help Gibbs revise the proof into a more formal mathematical style.

The three of them posted the paper online in February 2015. It reduced the area of the smallest universal covering from 0.8441377 to 0.8441153 units. The savings — just 0.0000224 units — was almost one million times larger than the savings that Hansen had found in 1992.

Gibbs was confident he could do better. In a paper posted online in October, he lopped another relatively gargantuan slice from the universal cover, bringing its area down to 0.84409359 units.

His strategy was to shift all diameter-1 shapes into a corner of the universal cover he’d found a few years earlier, then remove any remaining area in the opposite corner. Accurately measuring the area savings, however, proved exacting. The techniques Gibbs used are all from Euclidean geometry, but he had to execute with a precision that would make any high school student cross-eyed.

“As far as the math goes, it’s just high-school geometry. But it’s carried to a fanatical level of intensity,” wrote Baez.

To identify another common factor shared among several of the stories we've presented in this year's edition of the biggest math story of 2018, if you look at the end of Gibbs' October 2018 paper, you'll find Greg Egan's name referenced in the acknowledgments!

That last unique commonality brings us to what we believe is the biggest math story of 2018: the unusually large influence of amateurs and enthusiasts in advancing knowledge across multiple mathematical fields, which extends beyond the limited selection of stories we chose to highlight in this year's edition.

In considering what other factors are shared among the stories involving the discoveries made by amateur mathematicians in 2018, we find the widespread availability of computing technologies and the relatively recent establishment of very specialized networking connections between amateurs and professionals as significant changes from what we've seen in previous years. These are stories that we would not have seen five years ago when we began our tradition of celebrating the biggest math story of the year, and that change over time is why the growing capabilities and achievements of amateur mathematicians are the biggest math story of 2018.

Before we conclude this year's edition, let's share one more video, this time, featuring the magic of Möbius Kaleidocycles....

References and Miscellaneous Credits

- Baez, John Carlos. Lebesgue's Universal Covering, Illustration of Brass' and Sharifi's solution with three "covered" geometric shapes (we animated the original diagram to emphasize the three geometric shapes being "universally" covered by the solution).

- U.S. Geological Survey National Map: Congressional Districts (113th U.S. Congress), Public Domain.

Previously on Political Calculations

The Biggest Math Story of the Year is how we've traditionally marked the end of our posting year since 2014. Here are links to our previous editions, along with our coverage of other math stories during 2018:

- The Biggest Math Story of the Year (2014)

- The Biggest Math Story of 2015

- The Biggest Math Story of 2016

- The Biggest Math Story of 2017

- The Biggest Math Story of 2018

- The Music in Math Equations

- The Social Network of the Battle of Clontarf

- Math to Detect Partisan Gerrymandering

- Do the Quad Solve!

- Peeling Back the Layers of Octonions to Reveal the Laws of Physics

- Ranking the Worst-Ever One-Day Stock Market Cap Crashes

- How Big Is That Fire?

- The Past and Future North American Megadrought

- The Distortion of Everyday Maps

- The Math and Physics of Breaking Spaghetti

- A Universal Pattern in Math, Biology and Physics

- The Rent Is Too Damn High!

- The Constants in the Fine Structure Constant

- Solving the Topology of Poverty

- Shopping for the Biggest Ideas in Math

- Telescoping Median Household Income Back in Time

This is Political Calculations' final post for 2018. Thank you for reading us this year, have a Merry Christmas and a wonderful holiday season, and we'll see you again in the New Year!


On Monday, we announced that we were wrapping up "our S&P 500 Chaos series until 2019, unless market events prompt a special edition."

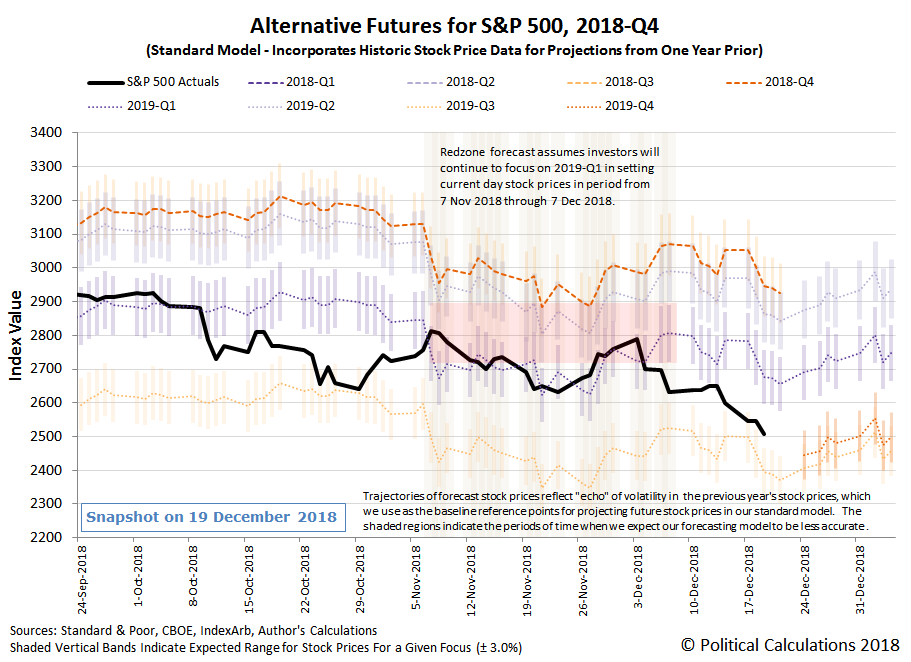

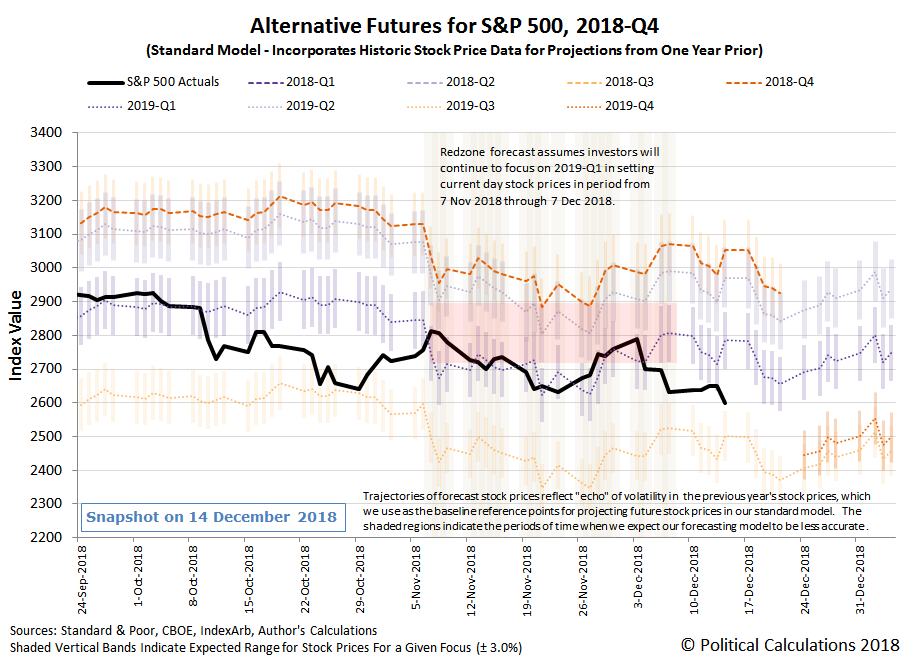

Well, market events have prompted this special edition! In falling by more than 2% on Friday, 21 December 2018, the S&P 500 finally completed its fifth Lévy flight event of 2018, as investors fully shifted their forward-looking attention from 2019-Q1 out toward the more distant future quarters of 2019-Q3 and 2019-Q4, where year-over-year dividend growth has long been projected to significantly slow.

We're now about to quote ourselves on the origin of the U.S. stock market's latest Lévy flight:

The U.S. stock market's fifth Lévy flight event of 2018 began on 4 December 2018 as investors reacted to the partial inversion of the U.S. Treasury yield curve and to New York Fed President John Williams' tone-deaf, hawkish comments promising more rate hikes well into 2019 on that date.

That's relevant today, because once again, New York Fed president John Williams' tone-deaf and hawkish comments have played a major role in sinking the S&P 500 (Index: SPX) to its lowest point in 2018, while President Trump's trade advisor Peter Navarro also contributed. Here are three key headlines and links to the stories behind them to provide context:

- Williams soothes markets, says Fed listening and could change policy

- Market not convinced after Fed’s Williams interview, says equity trader

- White House adviser says deal with China difficult without deep policy changes

ZeroHedge put together the following chart to illustrate what happened to stock prices as investors absorbed the specific things they said that drove the day's considerable volatility in the stock market.

Unless the Fed starts really listening to markets, the legacy of both Fed Chair Powell and New York Fed president Williams may lie in having needlessly pushed the U.S. economy into recession because they chose to keep the Fed's policies on autopilot.

Let's tie a bow on the major market-moving news headlines from the final full week of trading in 2018....

- Monday, 17 December 2018

- Tuesday, 18 December 2018

- Wednesday, 19 December 2018

- Thursday, 20 December 2018

- Friday, 21 December 2018

- U.S. shale producers hit the brakes on 2019 spending

- China denies rumors about not cutting taxes and fees

- Williams soothes markets, says Fed listening and could change policy

- Market not convinced after Fed’s Williams interview, says equity trader

- White House adviser says deal with China difficult without deep policy changes

- Nasdaq confirms bear market; economic worries sink Wall Street

Elsewhere, Barry Ritholtz divided the week's major economics and markets news into six positives and seven negatives.

That’s really it for our S&P 500 chaos series until 2019 - we'll post one last time in 2018 with the Biggest Math Story of the year. Enjoy the holidays, and we’ll be back to catch up on the latest major market news in January!

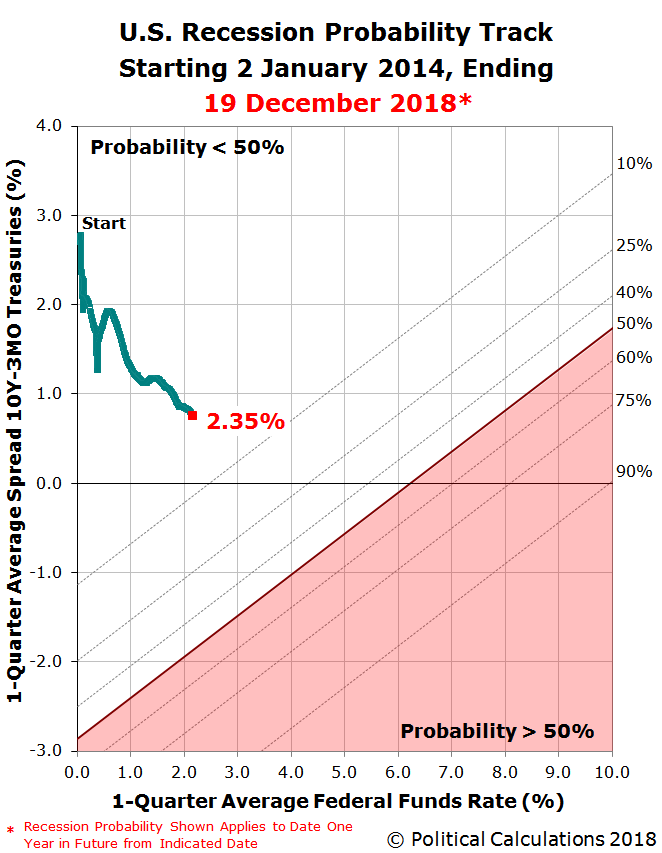

As long expected, the U.S. Federal Reserve hiked short term interest rates in the U.S. at the conclusion of its 18-19 December 2018 meeting, increasing the target range for their Federal Funds Rate by a quarter point to 2.25%-2.50%.

The risk that the U.S. economy will enter into a national recession at some time in the next twelve months now stands at 2.4%, which is up by roughly half of a percentage point since our last snapshot of the U.S. recession probability from early-November 2018. The current 2.4% probability works out to be about a 1-in-42 chance that a recession will eventually be found by the National Bureau of Economic Research to have begun at some point between 19 December 2018 and 19 December 2019, according to a model developed by Jonathan Wright of the Federal Reserve Board in 2006.
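The conversion from a percentage probability to "1-in-N" odds used above is simple arithmetic, sketched here with a helper name of our own choosing.

```python
def one_in_n(probability_percent: float) -> int:
    """Express a percentage probability as rounded '1 in N' odds."""
    return round(100 / probability_percent)


# 100 / 2.4 is about 41.67, which rounds to 42.
print(one_in_n(2.4))  # prints 42
```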

That increase from our last snapshot is roughly equally attributable to the Fed's most recent quarter point rate hike on 26 September 2018 and to the recent flattening of the U.S. Treasury yield curve, as measured by the spread between the yields of the 10-Year and 3-Month constant maturity treasuries, which has seen the 10-Year Treasury yield decrease as the bond market has priced in increased prospects for a global economic slowdown.

The Recession Probability Track shows where these two factors have set the probability of a recession starting in the U.S. during the next 12 months.

We anticipate that the probability of recession will continue to rise into 2019, partially as a consequence of today's rate hike and also because the Fed communicated that it is likely to continue hiking the Federal Funds Rate up to three times in 2019. ZeroHedge captured the market's shock:

"Everything was awesome" and then Jay Powell said...

"Some years ago, we took away the lesson that the markets were very sensitive to news about the balance sheet, so we thought carefully about how to normalize it and thought to have it on automatic pilot, and use rates to adjust to incoming data. That has been a good decision, I think, I don't see us changing that.... we don't see balance sheet runoff as creating problems"

And everything broke...

Overnight futures show hopeful buying - "surely The Fed will deliver and capitulate... for goodness sake, someone has to rescue my FANG portfolio!!??" - But The Fed did not - cutting their rate outlook by a mere one hike, with plenty still seeing 3 hikes ahead in 2019...

The market has a different perspective, where the CME Group's FedWatch tool is now anticipating no further rate hikes in 2019, and even the prospects of a rate cut in late 2019 or early 2020.

Scott Sumner provides more insight into the market's reaction:

The Fed delivered two monetary shocks today. The first occurred at 2:15pm, and caused yields on 2 and 5 -year bonds to increase. The second occurred about 30 minutes later, and caused yields on 2 and 5-year bonds to fall back, and end the day slightly lower. And yet both shocks seemed “contractionary” in some sense. Obviously there are some very subtle distinctions here, which require an understanding of Keynesian and Fisherian monetary shocks. More specifically, the first shock was Keynesian contractionary and the second was Fisherian contractionary.

At 2:15 the Fed raised its target rate as expected, and also indicated that another two rate increases are likely next year. This announcement was a bit more contractionary than expected, especially the path of rates going forward. As a result, 2 and 5-year yields rose, while 10-year yields fell on worries that the action would slow the economy, eventually leading to lower rates.

Later, the markets became increasingly worried that the Fed was not sufficiently “data dependent”, that it would plunge ahead with “quantitative tightening” and that the Fed stance on rates (IOR) would be too contractionary. Watching the press conference, I had the feeling that Powell might have wanted to be a bit stronger in emphasizing that no more rate increases are a clear possibility, but felt hemmed in by his colleagues at the Fed. But perhaps I was reading into it more than was there. In any case, Powell said “data dependent”, but didn’t really sell the markets that he was sincere, or as sincere as the market would wish. This monetary shock probably reduced NGDP growth expectations, and drove 2 and 5 year yields lower.

As for the U.S. stock market, the Fed's announced rate hike combined with Fed Chair Jerome Powell's press conference statements were sufficient to shift the forward-looking focus of investors toward 2019-Q3/2019-Q4 in setting stock prices, nearly fully completing the U.S. stock market's fifth Lévy flight event of 2018.

The U.S. stock market's fifth Lévy flight event of 2018 began on 4 December 2018 as investors reacted to the partial inversion of the U.S. Treasury yield curve and to New York Fed President John Williams' tone-deaf, hawkish comments promising more rate hikes well into 2019 on that date.

Update 8:25 AM EST: First Trust's Brian Wesbury and Robert Stein argue the stock market overreacted on Wednesday, 19 December 2018, while AQR's Cliff Asness holds that the reaction to the market's reaction is overwrought. From our perspective, that stock prices behaved as they did was predictable, where in the absence of a significant deterioration in the expectations for future dividends or a new noise event, the S&P 500 is now within several percent of its floor in the near term. We would not be surprised to see a bounce off this level, which would look like a correction to an overreaction, as investors may shift at least part of their forward-looking attention toward a different point of time in the future. The problem for investors is that the expectations for slow dividend growth associated with 2019-Q3/2019-Q4 are already long established, where they will overshadow their future outlook for some time.

Getting back to the topic of recession forecasting, it should be noted that Wright's model is based on historical data in which recessions have generally started at much higher interest rates than today's. The model also doesn't consider the additional quantitative tightening that the Fed might achieve by reducing the holdings of U.S. Treasuries on its balance sheet, so we're stretching the model's capability to assess the probability of recession in today's economic environment. It is quite possible that the model is understating the probability of a recession starting in the U.S. when the Federal Funds Rate is as low as it is today, which is a subject we expect to explore further in upcoming posts in this series.

Meanwhile, if you want to predict where the recession probability track is likely to head next, please take advantage of our recession odds reckoning tool, which like our Recession Probability Track chart, is also based on Jonathan Wright's 2006 paper describing a recession forecasting method using the level of the effective Federal Funds Rate and the spread between the yields of the 10-Year and 3-Month Constant Maturity U.S. Treasuries.
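For readers who would like to see the general shape of such a calculation, here is a minimal sketch that assumes the method takes the form of a probit model in the two inputs named above. That functional form is our assumption for illustration, and the coefficient values in the example are PLACEHOLDERS chosen only to make the code run. They are not the estimates from Wright's paper, and this is not the code behind our tool.

```python
from statistics import NormalDist


def recession_probability(fed_funds_rate: float, spread: float,
                          intercept: float, ffr_coef: float,
                          spread_coef: float) -> float:
    """Probit-style probability from the funds rate and the 10Y-3M spread.

    All three coefficients must be supplied by the caller; none are
    built in, because we are not reproducing any published estimates.
    """
    z = intercept + ffr_coef * fed_funds_rate + spread_coef * spread
    return NormalDist().cdf(z)  # standard normal cumulative distribution


# PLACEHOLDER coefficients, hypothetical values for illustration only.
placeholder = dict(intercept=-2.0, ffr_coef=0.1, spread_coef=-0.5)

steep_curve = recession_probability(2.4, 1.0, **placeholder)
flat_curve = recession_probability(2.4, 0.2, **placeholder)
print(flat_curve > steep_curve)  # a flatter curve gives the higher reading
```

With a negative coefficient on the spread, a flattening yield curve pushes the estimated probability up, which is the qualitative behavior described throughout this series.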

It's really easy. Plug in the most recent data available, or the data that would apply for a future scenario that you would like to consider, and compare the result you get in our tool with what we've shown in the most recent chart we've presented. The links below present each of the posts in the current series since we restarted it in June 2017.

Previously on Political Calculations

- The Return of the Recession Probability Track

- U.S. Recession Probability Low After Fed's July 2017 Meeting

- U.S. Recession Probability Ticks Slightly Up After Fed Does Nothing

- Déjà Vu All Over Again for U.S. Recession Probability

- Recession Probability Ticks Slightly Up as Fed Hikes

- U.S. Recession Risk Minimal (January 2018)

- U.S. Recession Probability Risk Still Minimal

- U.S. Recession Odds Tick Slightly Upward, Remain Very Low

- The Fed Meets, Nothing Happens, Recession Risk Stays Minimal

- Fed Raises Rates, Recession Risk to Rise in Response

- 1 in 91 Chance of U.S. Recession Starting Before August 2019

- 1 in 63 Chance of U.S. Recession Starting Before September 2019

- 1 in 54 Chance of U.S. Recession Starting Before November 2019

- 1 in 42 Chance of U.S. Recession Starting Before December 2019


On 28 November 2018, 20 black clergy in Philadelphia announced their opposition to the continuation of the controversial tax, breaking what had been their support for Philadelphia Mayor Jim Kenney's tax program, noting its disproportionately racial impact.

Nearly two years after the city’s sugary beverage tax went into effect, 20 members of Philadelphia’s Black clergy are calling for its repeal over concerns that the revenue-generator is regressive and disproportionately taxing African Americans and the poor.

“I don’t see how the tax, as it is constructed, can really effectively do what it is intending to do. We think it needs to be repealed and reconceptualized,” said the Rev. Jay Broadnax, president of the Black Clergy of Philadelphia and Vicinity....

The Philadelphia pastor said the 1.5-cent-an-ounce levy on sugary beverages, including diet soda, was having unintended consequences by saddling people of color, the poor and senior citizens with higher grocery bills, while dips in soda sales were hurting small neighborhood business owners.

“The way that it has worked out is that it seems to be hurting more than it’s helping,” Broadnax said.

Indeed it has, which has been evident for quite some time. Last December, economic analysis firm Oxford Economics (OE) quantified their assessment of the economic impact from the unpopular tax, finding:

Overall, our models indicate an employment decline of 1,192 workers in Philadelphia as a result of the PBT, or roughly 0.14 percent of Philadelphia employment. These job losses broke out to roughly 5 percent from bottling, 25 percent from beverage trade and transport margins, and 70 percent from reduced non-beverage grocery retail. Operational data provided by bottlers suggests that this modeling actually understates true job losses, by roughly 72 jobs. The modeled job losses correspond to $80 million in lost GDP, and $54 million less labor income. This reduced economic activity results in consequent tax losses, which our modeling can estimate. Overall, we find a $4.5 million reduction in local tax revenue.

Since the Oxford Economics report was commissioned by the American Beverage Association, its findings have been challenged on just that basis by political supporters of the soda tax who decry the influence of "Big Soda". We have no affiliation with the ABA, its members, or soda tax supporters, so we can offer an objective and independent assessment of its economic impact based on up-to-date information.

The following tool updates a previous analysis we provided in June 2017 to reflect the findings of other independent economic research, specifically the following key points:

- "Overall, we find that the estimates of the impact of the tax on the consumption of added sugars from SSBs and the frequency of consuming all taxed beverages are negative but not statistically significant for children and adults", which is to say that there is no meaningful positive benefit that is "correcting" any negative externality associated with sweetened soft drink consumption that might exist. (If anything, it has contributed to creating even bigger negative externalities.)

- "The tax was fully passed through to consumers, raising prices by 1.6 cents per ounce, on average, across all taxed beverages."

In our tool, we'll limit the price increase to just the 1.5 cents-per-ounce that was directly imposed upon beverages distributed in the city for retail sale by the Philadelphia Beverage Tax. We will also incorporate quantity data for the full 2017 calendar year, where we've estimated from other data provided by the City of Philadelphia that 8,438 million ounces of sweetened beverages would have been distributed for sale in the city without the tax, and that 5,256 million ounces were actually distributed in the city during the tax's first year in effect.

With those changes noted, here's the updated tool. If you're accessing this article on a site that republishes our RSS news feed, please click here to access a working version of the tool. If you would rather not, here is a screen shot of the results we obtained using the default data.

With the default data, our tool estimates the deadweight loss to Philadelphia's economy to be $23.8 million. This figure is a little under a third of the total $80 million economic loss that Oxford Economics projected using data for the first four months of the Philadelphia Beverage Tax being in effect.
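For readers who want to check the arithmetic by hand, here is a minimal sketch of one standard way to approximate a deadweight loss: the triangle formula, which assumes straight-line supply and demand between the no-tax and with-tax quantities. That assumption and the function names are ours for illustration and may not match how the tool works internally, but with the default data it lands at about $23.9 million, close to the tool's figure.

```python
# Triangle (Harberger) approximation of deadweight loss.
# Assumption: supply and demand are linear between the two quantities.

TAX_PER_OUNCE = 0.015            # dollars per ounce (1.5 cents), from the text
QUANTITY_WITHOUT_TAX = 8_438e6   # ounces, estimated no-tax volume for 2017
QUANTITY_WITH_TAX = 5_256e6      # ounces actually distributed in 2017
OXFORD_GDP_LOSS = 80e6           # dollars, Oxford Economics' projected loss


def deadweight_loss(tax, q_before, q_after):
    """Area of the triangle formed by the tax and the lost quantity."""
    return 0.5 * tax * (q_before - q_after)


def tax_revenue(tax, q_after):
    """Tax collected on the quantity still distributed after the tax."""
    return tax * q_after


dwl = deadweight_loss(TAX_PER_OUNCE, QUANTITY_WITHOUT_TAX, QUANTITY_WITH_TAX)
revenue = tax_revenue(TAX_PER_OUNCE, QUANTITY_WITH_TAX)

print(f"Deadweight loss: ${dwl / 1e6:.1f} million")
print(f"Implied tax revenue: ${revenue / 1e6:.1f} million")
print(f"Share of Oxford Economics' estimate: {dwl / OXFORD_GDP_LOSS:.1%}")
```

The same two functions also give the tax revenue implied by the with-tax quantity, which is a useful cross-check against the city's reported collections.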

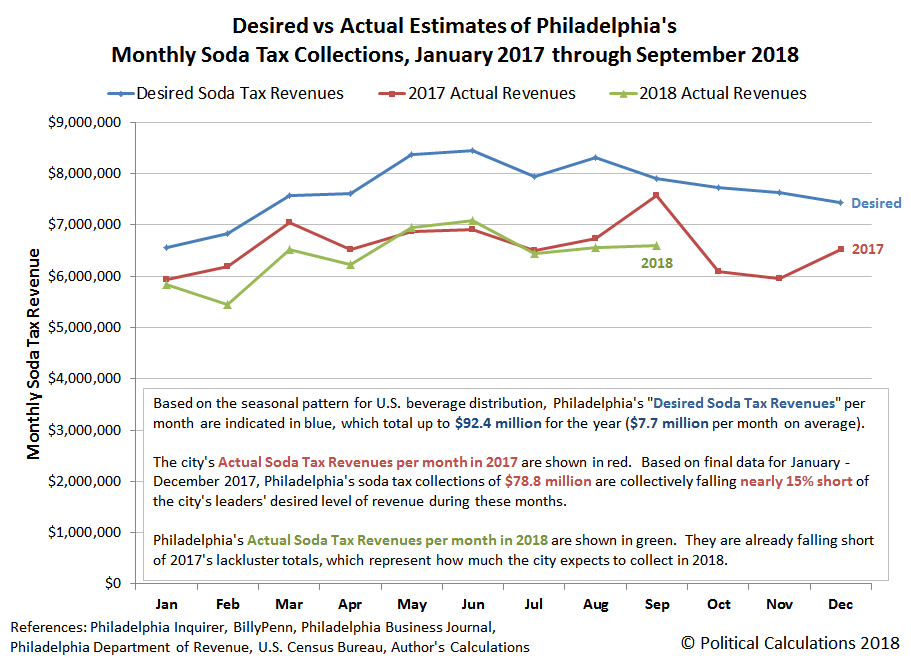

Moving the clock forward to 2018, we can confirm that Philadelphia's beverage tax collections are continuing to fall short of the city's lowered expectations. Through October 2018, the city is cumulatively running about $3 million behind where it was at the same point in 2017.

Philadelphia's civic leaders therefore have less money to spend than what they were expecting, but as it happens, they're not spending much of the soda tax money that they are collecting.

How much the soda tax has raised

From the time the tax went into effect in January 2017 to the end of the most recent fiscal quarter: $137 million.

...And how much has been spent

- $101 million is sitting unspent in the general fund (74 percent of total)

- $31.7 million on Pre-K programs (23 percent)

- $3.5 million on Community Schools (2.5 percent)

- $605k on Rebuild projects (less than 1 percent)
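The percentages in this breakdown can be checked with a few lines of arithmetic. The sketch below uses only the dollar figures listed above; the variable names are our own.

```python
# Shares of the $137 million raised, using the dollar amounts listed above.
total_raised = 137.0  # $ millions

allocations = {  # $ millions
    "Unspent in general fund": 101.0,
    "Pre-K programs": 31.7,
    "Community Schools": 3.5,
    "Rebuild projects": 0.605,
}

# Each category's fraction of the total raised.
shares = {name: amount / total_raised for name, amount in allocations.items()}

for name, share in shares.items():
    print(f"{name}: {share:.1%}")

# The listed amounts should add up to roughly the total raised.
accounted_for = sum(allocations.values())
print(f"Accounted for: ${accounted_for:.1f} million of ${total_raised:.0f} million")
```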

Philadelphia's new controller, Rebecca Rhynhart, is taking issue with how Philadelphia's political leaders are managing the money from its soda tax:

Philadelphia's contentious sugary beverage tax, now in its third fiscal year of collections, just became much more transparent to the public.

City Controller Rebecca Rhynhart released a searchable data set on Tuesday that provides access to all revenue and expenditures for the tax since it was enacted in January 2017....

Rhynhart had criticized the Kenney administration for keeping the revenue in the city's general fund rather than creating a segregated reserve account.

"The use of a segregated reserve account would ensure that revenue is spent only on earmarked programs and would promote transparency by making it easier to track revenue to expenses," Rhynhart's office said in a statement accompanying the data release.

Rhynhart is greatly concerned that Philadelphia's civic leaders might consider treating the money collected from the tax, which is supposed to be dedicated to funding pre-K programs, community schools, and improvements to public facilities, like a slush fund within the city's accounts. With about three-quarters of all the soda tax money sitting in the general fund unspent, it's virtually an open invitation for it to be misappropriated.

Meanwhile, the city's old controller, Alan Butkovitz, who has recognized many of the problems that have arisen from the Philadelphia Beverage Tax, is now running for mayor, and his opposition to current Mayor Jim Kenney's pet tax project is a centerpiece of his campaign.

There's a lot that is wrong with Philadelphia's soda tax, but the bottom line is that it isn't working.

Previously on Political Calculations

We've been covering the story of Philadelphia's flawed soda tax on roughly a monthly basis from almost the very beginning, with our coverage starting as something of a natural extension of one of the stories we featured as part of our Examples of Junk Science Series. The linked list below will take you through all our near-real-time analysis of the impact of the tax, a story that, at this writing, has yet to reach its end.

- Examples of Junk Science: Taxing Treats

- Philadelphia Soda Tax Crushes Soft Drink Sales

- The Tax Incidence and Deadweight Loss of Philadelphia's Soda Tax

- Philadelphia's Soda Tax Collections Are Falling Short

- Philly's Soda Tax Collections Continue to Fall Short of Goals

- Jobs Gained and Lost from Philadelphia's Soda Tax

- Philadelphia Soda Sales Volume Down 34% Since Tax

- Philadelphia Soda Tax to Shrink City's Economy by $20 Million

- Big Miss for Philadelphia's Beverage Tax

- Odds and Ends for Philadelphia's Soda Tax

- Legal Jeopardy for Philadelphia's Soda Tax

- Soda Tax Driving Philadelphians To Drink?

- Philadelphia Soda Tax Collections Start Fiscal Year in Deep Hole

- Philadelphia Soda Tax Collections Continues Falling Flat

- A Natural Experiment for Philadelphia's Soda Tax

- Philadelphia Soda Tax $20 Million Short with One Month to Go in First Year

- Philadelphia Soda Tax Falls 15% Short of Target

- Philadelphia Mayor Scales Back Soda Tax Ambitions

- Philadelphia Soda Tax Boosts City's Alcohol Sales

- Philadelphia Soda Tax Collections Falling Further Short in Year 2

- Philadelphia Soda Tax Underperforming Lowered Expectations

- PA Supreme Court Rules Philly Soda Tax Legal

- Philadelphians Sure Drink a Lot More Alcohol Since the City's Soda Tax Was Imposed

- Philly's Soda Tax Impact on City's Calorie Consumption

- Tax Avoidance and the Philadelphia Soda Tax

- Philadelphia Rebuild Paying Price for Soda Tax Shortfalls

- Philadelphia's Soda Tax Isn't Working

Labels: economics, food, politics, taxes, tool

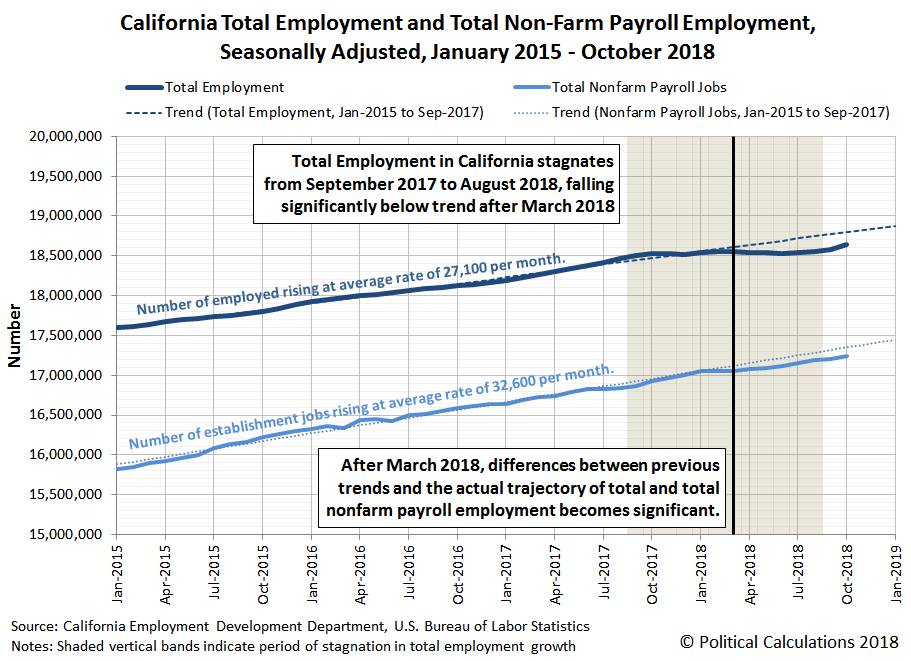

After growing at an average rate of 27,100 employed people per month from January 2015 to September 2017, California's total employment stalled out at a level just over 18.5 million in the 12 months from September 2017 to August 2018 before finally resuming an upward trajectory.

That observation started us digging into the state's employment data, where we were looking to identify distressed industries within the state, specifically looking for economic sectors that might have been losing jobs as others were gaining them, which would account for the period of stagnation.

After looking the data over, the leading candidates were the state's Mining and Logging sector (which includes Oil Extraction), the Construction sector, and California's catch-all "Other Services" economic sector. But the job numbers we saw in these industries didn't explain the full magnitude of the deviation in trend of the state's total employment level that occurred during this time.

That's when it occurred to us that we were comparing apples and oranges. The total employment data comes from the Bureau of Labor Statistics' monthly survey of U.S. households, while the industrial employment figures represent the number of jobs counted in the BLS' monthly survey of U.S. business establishments.

As it happens, we have some experience with that topic: the top Google search result for the difference between the household and establishment surveys is currently an article that we wrote several years ago.

It occurred to us that we could use the difference between the kinds of employment data collected between the two surveys to determine if the apparent stagnation in California's total employment level from September 2017 through August 2018 could be partially accounted for by changes in non-establishment employment, which could potentially explain the gap.

So we took both sets of seasonally-adjusted data and graphed them on the same chart, projecting the linear trend that each had established before the stagnation in the total employment data took hold in September 2017. We found something remarkable.

Both total employment and nonfarm establishment jobs in California grew steadily in the period from January 2015 through September 2017. While the total employment level began to stagnate at that point, the number of filled jobs at establishments continued to grow until January 2018, after which it began to flatten. After March 2018, both series of data deviate significantly from their established linear trends, with the total employment data showing a bigger negative deviation than the total nonfarm payroll data.
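The trend projection described above can be sketched in a few lines. This is a minimal illustration rather than the exact method behind our charts: it fits an ordinary least squares line to a baseline period, extends that line forward, and reports how far later observations fall from it. The sample numbers are hypothetical placeholders, not BLS data, and the function names are our own.

```python
def fit_linear_trend(values):
    """Least squares fit of values against their month index (0, 1, 2, ...)."""
    n = len(values)
    xs = range(n)
    mean_x = sum(xs) / n
    mean_y = sum(values) / n
    slope = (
        sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, values))
        / sum((x - mean_x) ** 2 for x in xs)
    )
    intercept = mean_y - slope * mean_x
    return slope, intercept


def deviations_from_trend(baseline, later):
    """Actual minus projected value for each month after the baseline."""
    slope, intercept = fit_linear_trend(baseline)
    start = len(baseline)
    return [
        actual - (intercept + slope * (start + i))
        for i, actual in enumerate(later)
    ]


# Hypothetical series in thousands of people: 33 months of steady growth
# (January 2015 through September 2017), then six months with no growth.
baseline = [17_650 + 27.1 * month for month in range(33)]
later = [baseline[-1]] * 6

gaps = deviations_from_trend(baseline, later)
for month, gap in enumerate(gaps, start=1):
    print(f"Month {month} after baseline: {gap:+.1f} thousand vs trend")
```

Running the same calculation on both the household and establishment series, then comparing the two sets of gaps, is how one would see which series deviates more from its prior trend.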

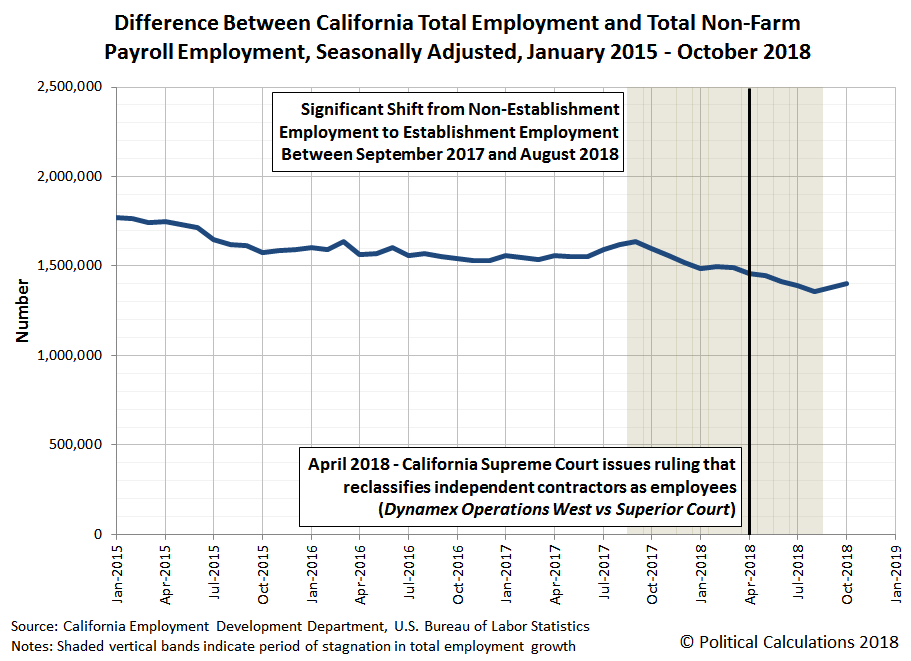

What this data tells us is that there was a notable shift, beginning in September 2017 and more notably after March 2018, where Californians employed outside of business establishments in the state shifted to become payroll employees. The following chart shows the difference between the two data series (note that this math can only produce at best a rough estimate of the change given the differences in the types of employment data involved - the two sets of data do not fit precisely together like puzzle pieces).

The chart also marks the timing of a powerful explanation for the deviation from previous trend that we observe after March 2018. In April 2018, the California Supreme Court ruled in Dynamex Operations West, Inc. vs Superior Court that many Californian employers would have to reclassify many workers who they had previously employed as independent contractors under Californian state law as regular employees, putting them on their establishment's payrolls.

The court's arbitrary ruling was expected to cause "massive disruption to California's gig-economy employers", which is what the significant deviation from previous trend for both total employment and total nonfarm payroll jobs after March 2018 suggests has occurred.

That doesn't explain the shift that appears to have occurred from September 2017 to March 2018, but that period also roughly coincides with the peaking and subsequent decline of California's housing market that has largely been driven by the Federal Reserve's recent series of rate hikes. This sector of California's economy coincidentally employs about one out of every five independent contractors in the state, and the decline of the market may have prompted many of these Californians to stop being their own boss and start working on someone else's payroll.

Although all the employment data we've presented in this post is still subject to future revision, it does suggest that California's gig-economy did experience a court-ordered disruption during 2018. That same preliminary data also suggests that the period of adjustment may now be over, but we won't know for sure that's the case for quite some time.

Labels: jobs, real estate, recession

As we enter the final full week for trading in 2018, we find that S&P 500 (Index: SPX) investors appear to be splitting their forward-looking attention between the first and third quarters of the new year in setting today's stock prices.

We believe the reason for this split is two-fold. First, political control of the U.S. House of Representatives is changing from the Republican party to the Democrat party in 2019-Q1, which will come with a host of policy changes that can impact the outlook for businesses and the economy depending upon how far they might be expected to advance.

Second, investor concerns about the Fed's plans for future rate hikes are now at elevated levels because of the recent partial inversion of the U.S. Treasury yield curve. Further Fed rate hikes can only exacerbate that situation and increase the likelihood of a recession given the current market environment, where the Fed is expected to hike rates by a quarter point later this week. The bigger question is how many more hikes will follow in 2019?

A month ago, investors had been anticipating two rate hikes in 2019, one in March 2019 and another in September 2019, following the long anticipated hike in December 2018. Two weeks ago, investors were betting on the scenario that there would be at least one hike in the next year, coming in June 2019.

In a sign of how rapidly expectations for the future are changing, as of Friday, 14 December 2018, the CME Group's FedWatch Tool is still forecasting just one Fed rate hike in 2019, which has been pushed out into September 2019. The following image captures the FedWatch Tool's estimated probabilities for rate hikes by amount and date, which indicates that there is a greater than 50% probability that the Fed will hike its Federal Funds Rate to the 2.50-2.75% (or 250-275 basis points) range or higher at the conclusion of its 18 September 2019 meeting during 2019-Q3.

Although the red-highlighted sections of the chart indicate the rate hike occurring after the Fed's 30 October 2019 meeting (in 2019-Q4), summing the probabilities that the Federal Funds Rate will be in the 2.50%-2.75% range or higher at the Fed's 18 September 2019 meeting confirms that investors are currently giving better-than-even odds of a Fed rate hike at this earlier date. As we noted before, these expectations have been evolving rapidly in recent weeks, where perhaps the CME Group's tabulator has gotten a bit ahead of the curve....
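The summing step is simple enough to sketch. The probabilities below are hypothetical placeholders chosen only to illustrate the method; they are not the FedWatch Tool's actual figures, and the function name is our own.

```python
# Hypothetical probabilities for the target range after a single meeting.
# Keys are (low, high) bounds of the target range in basis points.
meeting_probabilities = {
    (200, 225): 0.05,
    (225, 250): 0.43,
    (250, 275): 0.40,
    (275, 300): 0.11,
    (300, 325): 0.01,
}


def probability_at_or_above(distribution, lower_bound_bp):
    """Sum the probabilities of every range starting at or above the bound."""
    return sum(
        probability
        for (low, _high), probability in distribution.items()
        if low >= lower_bound_bp
    )


odds = probability_at_or_above(meeting_probabilities, 250)
print(f"Probability of 2.50%-2.75% or higher: {odds:.0%}")
print("Better than even odds" if odds > 0.5 else "Even odds or worse")
```

No single range needs to exceed 50% on its own; what matters is the combined weight of every range at or above the threshold.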

That said, this is the last edition of our S&P 500 Chaos series until 2019, unless market events prompt a special edition. Otherwise, we'll return with a year-end catch-up edition in early January 2019! Until then, here are the major market-moving headlines for the week that was Week 2 of December 2018.

- Monday, 10 December 2018

- Tuesday, 11 December 2018

- Oil pares gains on threatened U.S. government shutdown

- China, U.S. discuss road map for next stage of trade talks

- Trump says 'very productive' talks with China

- White House expects China to cut car tariffs after trade call: auto executive

- S&P 500, Dow edge lower as U.S. shutdown threat, China trade in focus

- Wednesday, 12 December 2018

- Oil ends lower on Iran comments despite Libya, OPEC supply cuts

- U.S. mortgage activity hits 2-month high as interest rates fall: MBA

- China preparing plan to increase access for foreign companies: WSJ

- Exclusive: Trump says Fed shouldn't hike rates, but calls Powell 'a good man'

- Wall Street closes up, investors optimistic on China trade

- Thursday, 13 December 2018

- Friday, 14 December 2018

Barry Ritholtz has outlined the positives and negatives among the week's major economics and markets-related news over at The Big Picture.

On a more general publishing note, Political Calculations will be covering a handful of stories this week before signing off for our annual hiatus until 2019, including an update for our U.S. recession probability estimates following the Fed's meeting on Thursday and also the biggest math story of 2018 as our final post of 2018, which we haven't yet scheduled - all we know right now is "before Christmas arrives". Thank you for reading, and have a wonderful holiday season!

